一、本文介绍

本文给大家带来的是主干网络 RevColV1 ,翻译过来就是可逆列网络,其是一种新型的 神经网络 设计 (和以前的网络结构的传播方式不太一样) ,由多个 子网络 (列)通过多级可逆连接组成。这种设计允许在前向传播过程中特征解耦,保持总信息无压缩或丢弃。其非常适合数据集庞大的目标检测任务, 数据集数量越多其效果性能越好 ,亲测在包含1000个图片的数据集上其涨点效果就非常明显了,大家可以多动手尝试, 其RevColV2的论文同时已经发布如果代码开源我也会第一时间给大家上传。

目录

二、RevColV1的框架原理

官方论文地址: 官方论文地址

官方代码地址: 官方代码地址

2.1 RevColV1的基本原理

RevCol的主要原理和思想是利用可逆连接来设计网络结构,允许信息在网络的不同分支(列)间自由流动而不丢失。这种多列结构在 前向传播 过程中逐渐解耦特征,并保持全部信息,而不是进行压缩或舍弃。这样的设计提高了网络在图像分类、对象检测和语义分割等 计算机视觉 任务中的表现,尤其是在参数量大和数据集大时。

RevCol的创新点我将其总结为以下几点:

1. 可逆连接设计:通过多个子网络(列)间的可逆连接,保证信息在前向传播过程中不丢失。

2. 特征解耦:在每个列中,特征逐渐被解耦,保持总信息而非压缩或舍弃。

3. 适用于大型数据集和参数:在大型数据集和高参数预算下表现出色。

4. 跨模型应用:可作为宏架构方式,应用于变换器或其他神经网络,改善计算机视觉和NLP任务的性能。

简单总结: RevCol通过其独特的多列结构和可逆连接设计,使得网络能够在处理信息时保持完整性,提高特征处理的效率。这种架构在数据丰富且复杂的情况下尤为有效,且可灵活应用于不同类型的 神经网络模型 中。

其中的创新点第四点不用叙述了,网络结构可以应用于我们的 YOLOv8 就是最好的印证。

这是论文中的图片1,展示了传统单列网络(a)与RevCol(b)的信息传播对比。在图(a)中,信息通过一个接一个的层线性传播,每层处理后传递给下一层直至输出。而在图(b)中,RevCol通过多个

并行

列(Col 1 到 Col N)处理信息,其中可逆连接(蓝色曲线)允许信息在列间传递,保持低级别和语义级别的信息传播。这种结构有助于整个网络维持更丰富的信息,并且每个列都能从其他列中学习到信息,增强了特征的表达和网络的学习能力

(但是这种做法导致模型的参数量非常巨大,而且训练速度缓慢计算量比较大)。

这是论文中的图片1,展示了传统单列网络(a)与RevCol(b)的信息传播对比。在图(a)中,信息通过一个接一个的层线性传播,每层处理后传递给下一层直至输出。而在图(b)中,RevCol通过多个

并行

列(Col 1 到 Col N)处理信息,其中可逆连接(蓝色曲线)允许信息在列间传递,保持低级别和语义级别的信息传播。这种结构有助于整个网络维持更丰富的信息,并且每个列都能从其他列中学习到信息,增强了特征的表达和网络的学习能力

(但是这种做法导致模型的参数量非常巨大,而且训练速度缓慢计算量比较大)。

2.1.1 可逆连接设计

在RevCol中的可逆连接设计允许多个子网络(称为列)之间进行信息的双向流动。这意味着在前向传播的过程中,每一列都能接收到前一列的信息,并将自己的处理结果传递给下一列,同时能够保留传递过程中的所有信息。这种设计避免了在传统的深度网络中常见的信息丢失问题,特别是在网络层次较深时。因此,RevCol可以在深层网络中维持丰富的特征表示,从而提高了模型对数据的表示能力和学习效率。

这张图片展示了RevCol网络的不同组成部分和信息流动方式。

- 图 (a) 展示了RevNet中的一个可逆单元,标识了不同时间步长的状态。

- 图 (b) 展示了多级可逆单元,所有输入在不同级别上进行信息交换。

- 图 (c) 提供了整个可逆列网络架构的概览,其中包含了简化的多级可逆单元。

整个设计允许信息在网络的不同层级和列之间自由流动,而不会丢失任何信息,这对于深层网络的学习和特征提取是非常有益的 (我觉得这里有点类似于Neck部分允许层级之间相互交流信息) 。

2.1.2 特征解耦

特征解耦是指在RevCol网络的每个子网络(列)中,特征通过可逆连接传递,同时独立地进行处理和学习。这样,每个列都能保持输入信息的完整性,而不会像传统的深度网络那样,在层与层之间传递时压缩或丢弃信息。随着信息在列中的前进,特征之间的关联性逐渐减弱(解耦),使得网络能够更细致地捕捉并强调重要的特征,这有助于提高模型在复杂任务上的性能和泛化能力。

这张图展示了RevCol网络的一个级别(Level l)的微观设计,以及特征融合模块(Fusion Block)的设计。在图(a)中,展示了ConvNeXt级别的标准结构,包括下采样块和残差块。图(b)中的RevCol级别包含了融合模块、残差块和可逆操作。这里的特征解耦是通过融合模块实现的,该模块接收相邻级别的特征图

,

,

作为输入,并将它们融合以生成新的特征表示。这样,不同级别的特征在融合过程中被解耦,每个级别维持其信息而不压缩或舍弃。图(c)详细描述了融合模块的内部结构,它通过上采样和下采样操作处理不同分辨率的特征图,然后将它们线性叠加,形成为ConvNeXt块提供的特征。这种设计让特征在不同分辨率间流动时进行有效融合。

作为输入,并将它们融合以生成新的特征表示。这样,不同级别的特征在融合过程中被解耦,每个级别维持其信息而不压缩或舍弃。图(c)详细描述了融合模块的内部结构,它通过上采样和下采样操作处理不同分辨率的特征图,然后将它们线性叠加,形成为ConvNeXt块提供的特征。这种设计让特征在不同分辨率间流动时进行有效融合。

2.2 RevColV1的表现

这张图片展示了伴随着FLOPs的增长TOP1的准确率情况,可以看出RevColV1伴随着FLOPs的增加效果逐渐明显。

三、RevColV1的核心代码

下面的代码是RevColV1的全部代码,其中包含多个版本,但是大家需要注意这个模型训练非常耗时,参数量非常大,但是其特点就是参数量越大效果越好。其使用方式看章节四。

- # --------------------------------------------------------

- # Reversible Column Networks

- # Copyright (c) 2022 Megvii Inc.

- # Licensed under The Apache License 2.0 [see LICENSE for details]

- # Written by Yuxuan Cai

- # --------------------------------------------------------

- from typing import Tuple, Any, List

- from timm.models.layers import trunc_normal_

- import torch

- import torch.nn as nn

- import torch.nn.functional as F

- from timm.models.layers import DropPath

-

- __all__ = ['revcol_tiny', 'revcol_small', 'revcol_base', 'revcol_large', 'revcol_xlarge']

-

- class UpSampleConvnext(nn.Module):

- def __init__(self, ratio, inchannel, outchannel):

- super().__init__()

- self.ratio = ratio

- self.channel_reschedule = nn.Sequential(

- # LayerNorm(inchannel, eps=1e-6, data_format="channels_last"),

- nn.Linear(inchannel, outchannel),

- LayerNorm(outchannel, eps=1e-6, data_format="channels_last"))

- self.upsample = nn.Upsample(scale_factor=2 ** ratio, mode='nearest')

-

- def forward(self, x):

- x = x.permute(0, 2, 3, 1)

- x = self.channel_reschedule(x)

- x = x = x.permute(0, 3, 1, 2)

-

- return self.upsample(x)

-

-

- class LayerNorm(nn.Module):

- r""" LayerNorm that supports two data formats: channels_last (default) or channels_first.

- The ordering of the dimensions in the inputs. channels_last corresponds to inputs with

- shape (batch_size, height, width, channels) while channels_first corresponds to inputs

- with shape (batch_size, channels, height, width).

- """

-

- def __init__(self, normalized_shape, eps=1e-6, data_format="channels_first", elementwise_affine=True):

- super().__init__()

- self.elementwise_affine = elementwise_affine

- if elementwise_affine:

- self.weight = nn.Parameter(torch.ones(normalized_shape))

- self.bias = nn.Parameter(torch.zeros(normalized_shape))

- self.eps = eps

- self.data_format = data_format

- if self.data_format not in ["channels_last", "channels_first"]:

- raise NotImplementedError

- self.normalized_shape = (normalized_shape,)

-

- def forward(self, x):

- if self.data_format == "channels_last":

- return F.layer_norm(x, self.normalized_shape, self.weight, self.bias, self.eps)

- elif self.data_format == "channels_first":

- u = x.mean(1, keepdim=True)

- s = (x - u).pow(2).mean(1, keepdim=True)

- x = (x - u) / torch.sqrt(s + self.eps)

- if self.elementwise_affine:

- x = self.weight[:, None, None] * x + self.bias[:, None, None]

- return x

-

-

- class ConvNextBlock(nn.Module):

- r""" ConvNeXt Block. There are two equivalent implementations:

- (1) DwConv -> LayerNorm (channels_first) -> 1x1 Conv -> GELU -> 1x1 Conv; all in (N, C, H, W)

- (2) DwConv -> Permute to (N, H, W, C); LayerNorm (channels_last) -> Linear -> GELU -> Linear; Permute back

- We use (2) as we find it slightly faster in PyTorch

- Args:

- dim (int): Number of input channels.

- drop_path (float): Stochastic depth rate. Default: 0.0

- layer_scale_init_value (float): Init value for Layer Scale. Default: 1e-6.

- """

-

- def __init__(self, in_channel, hidden_dim, out_channel, kernel_size=3, layer_scale_init_value=1e-6, drop_path=0.0):

- super().__init__()

- self.dwconv = nn.Conv2d(in_channel, in_channel, kernel_size=kernel_size, padding=(kernel_size - 1) // 2,

- groups=in_channel) # depthwise conv

- self.norm = nn.LayerNorm(in_channel, eps=1e-6)

- self.pwconv1 = nn.Linear(in_channel, hidden_dim) # pointwise/1x1 convs, implemented with linear layers

- self.act = nn.GELU()

- self.pwconv2 = nn.Linear(hidden_dim, out_channel)

- self.gamma = nn.Parameter(layer_scale_init_value * torch.ones((out_channel)),

- requires_grad=True) if layer_scale_init_value > 0 else None

- self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()

-

- def forward(self, x):

- input = x

- x = self.dwconv(x)

- x = x.permute(0, 2, 3, 1) # (N, C, H, W) -> (N, H, W, C)

- x = self.norm(x)

- x = self.pwconv1(x)

- x = self.act(x)

- # print(f"x min: {x.min()}, x max: {x.max()}, input min: {input.min()}, input max: {input.max()}, x mean: {x.mean()}, x var: {x.var()}, ratio: {torch.sum(x>8)/x.numel()}")

- x = self.pwconv2(x)

- if self.gamma is not None:

- x = self.gamma * x

- x = x.permute(0, 3, 1, 2) # (N, H, W, C) -> (N, C, H, W)

-

- x = input + self.drop_path(x)

- return x

-

-

- class Decoder(nn.Module):

- def __init__(self, depth=[2, 2, 2, 2], dim=[112, 72, 40, 24], block_type=None, kernel_size=3) -> None:

- super().__init__()

- self.depth = depth

- self.dim = dim

- self.block_type = block_type

- self._build_decode_layer(dim, depth, kernel_size)

- self.projback = nn.Sequential(

- nn.Conv2d(

- in_channels=dim[-1],

- out_channels=4 ** 2 * 3, kernel_size=1),

- nn.PixelShuffle(4),

- )

-

- def _build_decode_layer(self, dim, depth, kernel_size):

- normal_layers = nn.ModuleList()

- upsample_layers = nn.ModuleList()

- proj_layers = nn.ModuleList()

-

- norm_layer = LayerNorm

-

- for i in range(1, len(dim)):

- module = [self.block_type(dim[i], dim[i], dim[i], kernel_size) for _ in range(depth[i])]

- normal_layers.append(nn.Sequential(*module))

- upsample_layers.append(nn.Upsample(scale_factor=2, mode='bilinear', align_corners=True))

- proj_layers.append(nn.Sequential(

- nn.Conv2d(dim[i - 1], dim[i], 1, 1),

- norm_layer(dim[i]),

- nn.GELU()

- ))

- self.normal_layers = normal_layers

- self.upsample_layers = upsample_layers

- self.proj_layers = proj_layers

-

- def _forward_stage(self, stage, x):

- x = self.proj_layers[stage](x)

- x = self.upsample_layers[stage](x)

- return self.normal_layers[stage](x)

-

- def forward(self, c3):

- x = self._forward_stage(0, c3) # 14

- x = self._forward_stage(1, x) # 28

- x = self._forward_stage(2, x) # 56

- x = self.projback(x)

- return x

-

-

- class SimDecoder(nn.Module):

- def __init__(self, in_channel, encoder_stride) -> None:

- super().__init__()

- self.projback = nn.Sequential(

- LayerNorm(in_channel),

- nn.Conv2d(

- in_channels=in_channel,

- out_channels=encoder_stride ** 2 * 3, kernel_size=1),

- nn.PixelShuffle(encoder_stride),

- )

-

- def forward(self, c3):

- return self.projback(c3)

-

-

- def get_gpu_states(fwd_gpu_devices) -> Tuple[List[int], List[torch.Tensor]]:

- # This will not error out if "arg" is a CPU tensor or a non-tensor type because

- # the conditionals short-circuit.

- fwd_gpu_states = []

- for device in fwd_gpu_devices:

- with torch.cuda.device(device):

- fwd_gpu_states.append(torch.cuda.get_rng_state())

-

- return fwd_gpu_states

-

-

- def get_gpu_device(*args):

- fwd_gpu_devices = list(set(arg.get_device() for arg in args

- if isinstance(arg, torch.Tensor) and arg.is_cuda))

- return fwd_gpu_devices

-

-

- def set_device_states(fwd_cpu_state, devices, states) -> None:

- torch.set_rng_state(fwd_cpu_state)

- for device, state in zip(devices, states):

- with torch.cuda.device(device):

- torch.cuda.set_rng_state(state)

-

-

- def detach_and_grad(inputs: Tuple[Any, ...]) -> Tuple[torch.Tensor, ...]:

- if isinstance(inputs, tuple):

- out = []

- for inp in inputs:

- if not isinstance(inp, torch.Tensor):

- out.append(inp)

- continue

-

- x = inp.detach()

- x.requires_grad = True

- out.append(x)

- return tuple(out)

- else:

- raise RuntimeError(

- "Only tuple of tensors is supported. Got Unsupported input type: ", type(inputs).__name__)

-

-

- def get_cpu_and_gpu_states(gpu_devices):

- return torch.get_rng_state(), get_gpu_states(gpu_devices)

-

-

- class ReverseFunction(torch.autograd.Function):

- @staticmethod

- def forward(ctx, run_functions, alpha, *args):

- l0, l1, l2, l3 = run_functions

- alpha0, alpha1, alpha2, alpha3 = alpha

- ctx.run_functions = run_functions

- ctx.alpha = alpha

- ctx.preserve_rng_state = True

-

- ctx.gpu_autocast_kwargs = {"enabled": torch.is_autocast_enabled(),

- "dtype": torch.get_autocast_gpu_dtype(),

- "cache_enabled": torch.is_autocast_cache_enabled()}

- ctx.cpu_autocast_kwargs = {"enabled": torch.is_autocast_cpu_enabled(),

- "dtype": torch.get_autocast_cpu_dtype(),

- "cache_enabled": torch.is_autocast_cache_enabled()}

-

- assert len(args) == 5

- [x, c0, c1, c2, c3] = args

- if type(c0) == int:

- ctx.first_col = True

- else:

- ctx.first_col = False

- with torch.no_grad():

- gpu_devices = get_gpu_device(*args)

- ctx.gpu_devices = gpu_devices

- ctx.cpu_states_0, ctx.gpu_states_0 = get_cpu_and_gpu_states(gpu_devices)

- c0 = l0(x, c1) + c0 * alpha0

- ctx.cpu_states_1, ctx.gpu_states_1 = get_cpu_and_gpu_states(gpu_devices)

- c1 = l1(c0, c2) + c1 * alpha1

- ctx.cpu_states_2, ctx.gpu_states_2 = get_cpu_and_gpu_states(gpu_devices)

- c2 = l2(c1, c3) + c2 * alpha2

- ctx.cpu_states_3, ctx.gpu_states_3 = get_cpu_and_gpu_states(gpu_devices)

- c3 = l3(c2, None) + c3 * alpha3

- ctx.save_for_backward(x, c0, c1, c2, c3)

- return x, c0, c1, c2, c3

-

- @staticmethod

- def backward(ctx, *grad_outputs):

- x, c0, c1, c2, c3 = ctx.saved_tensors

- l0, l1, l2, l3 = ctx.run_functions

- alpha0, alpha1, alpha2, alpha3 = ctx.alpha

- gx_right, g0_right, g1_right, g2_right, g3_right = grad_outputs

- (x, c0, c1, c2, c3) = detach_and_grad((x, c0, c1, c2, c3))

-

- with torch.enable_grad(), \

- torch.random.fork_rng(devices=ctx.gpu_devices, enabled=ctx.preserve_rng_state), \

- torch.cuda.amp.autocast(**ctx.gpu_autocast_kwargs), \

- torch.cpu.amp.autocast(**ctx.cpu_autocast_kwargs):

-

- g3_up = g3_right

- g3_left = g3_up * alpha3 ##shortcut

- set_device_states(ctx.cpu_states_3, ctx.gpu_devices, ctx.gpu_states_3)

- oup3 = l3(c2, None)

- torch.autograd.backward(oup3, g3_up, retain_graph=True)

- with torch.no_grad():

- c3_left = (1 / alpha3) * (c3 - oup3) ## feature reverse

- g2_up = g2_right + c2.grad

- g2_left = g2_up * alpha2 ##shortcut

-

- (c3_left,) = detach_and_grad((c3_left,))

- set_device_states(ctx.cpu_states_2, ctx.gpu_devices, ctx.gpu_states_2)

- oup2 = l2(c1, c3_left)

- torch.autograd.backward(oup2, g2_up, retain_graph=True)

- c3_left.requires_grad = False

- cout3 = c3_left * alpha3 ##alpha3 update

- torch.autograd.backward(cout3, g3_up)

-

- with torch.no_grad():

- c2_left = (1 / alpha2) * (c2 - oup2) ## feature reverse

- g3_left = g3_left + c3_left.grad if c3_left.grad is not None else g3_left

- g1_up = g1_right + c1.grad

- g1_left = g1_up * alpha1 ##shortcut

-

- (c2_left,) = detach_and_grad((c2_left,))

- set_device_states(ctx.cpu_states_1, ctx.gpu_devices, ctx.gpu_states_1)

- oup1 = l1(c0, c2_left)

- torch.autograd.backward(oup1, g1_up, retain_graph=True)

- c2_left.requires_grad = False

- cout2 = c2_left * alpha2 ##alpha2 update

- torch.autograd.backward(cout2, g2_up)

-

- with torch.no_grad():

- c1_left = (1 / alpha1) * (c1 - oup1) ## feature reverse

- g0_up = g0_right + c0.grad

- g0_left = g0_up * alpha0 ##shortcut

- g2_left = g2_left + c2_left.grad if c2_left.grad is not None else g2_left ## Fusion

-

- (c1_left,) = detach_and_grad((c1_left,))

- set_device_states(ctx.cpu_states_0, ctx.gpu_devices, ctx.gpu_states_0)

- oup0 = l0(x, c1_left)

- torch.autograd.backward(oup0, g0_up, retain_graph=True)

- c1_left.requires_grad = False

- cout1 = c1_left * alpha1 ##alpha1 update

- torch.autograd.backward(cout1, g1_up)

-

- with torch.no_grad():

- c0_left = (1 / alpha0) * (c0 - oup0) ## feature reverse

- gx_up = x.grad ## Fusion

- g1_left = g1_left + c1_left.grad if c1_left.grad is not None else g1_left ## Fusion

- c0_left.requires_grad = False

- cout0 = c0_left * alpha0 ##alpha0 update

- torch.autograd.backward(cout0, g0_up)

-

- if ctx.first_col:

- return None, None, gx_up, None, None, None, None

- else:

- return None, None, gx_up, g0_left, g1_left, g2_left, g3_left

-

-

- class Fusion(nn.Module):

- def __init__(self, level, channels, first_col) -> None:

- super().__init__()

-

- self.level = level

- self.first_col = first_col

- self.down = nn.Sequential(

- nn.Conv2d(channels[level - 1], channels[level], kernel_size=2, stride=2),

- LayerNorm(channels[level], eps=1e-6, data_format="channels_first"),

- ) if level in [1, 2, 3] else nn.Identity()

- if not first_col:

- self.up = UpSampleConvnext(1, channels[level + 1], channels[level]) if level in [0, 1, 2] else nn.Identity()

-

- def forward(self, *args):

-

- c_down, c_up = args

-

- if self.first_col:

- x = self.down(c_down)

- return x

-

- if self.level == 3:

- x = self.down(c_down)

- else:

- x = self.up(c_up) + self.down(c_down)

- return x

-

-

- class Level(nn.Module):

- def __init__(self, level, channels, layers, kernel_size, first_col, dp_rate=0.0) -> None:

- super().__init__()

- countlayer = sum(layers[:level])

- expansion = 4

- self.fusion = Fusion(level, channels, first_col)

- modules = [ConvNextBlock(channels[level], expansion * channels[level], channels[level], kernel_size=kernel_size,

- layer_scale_init_value=1e-6, drop_path=dp_rate[countlayer + i]) for i in

- range(layers[level])]

- self.blocks = nn.Sequential(*modules)

-

- def forward(self, *args):

- x = self.fusion(*args)

- x = self.blocks(x)

- return x

-

-

- class SubNet(nn.Module):

- def __init__(self, channels, layers, kernel_size, first_col, dp_rates, save_memory) -> None:

- super().__init__()

- shortcut_scale_init_value = 0.5

- self.save_memory = save_memory

- self.alpha0 = nn.Parameter(shortcut_scale_init_value * torch.ones((1, channels[0], 1, 1)),

- requires_grad=True) if shortcut_scale_init_value > 0 else None

- self.alpha1 = nn.Parameter(shortcut_scale_init_value * torch.ones((1, channels[1], 1, 1)),

- requires_grad=True) if shortcut_scale_init_value > 0 else None

- self.alpha2 = nn.Parameter(shortcut_scale_init_value * torch.ones((1, channels[2], 1, 1)),

- requires_grad=True) if shortcut_scale_init_value > 0 else None

- self.alpha3 = nn.Parameter(shortcut_scale_init_value * torch.ones((1, channels[3], 1, 1)),

- requires_grad=True) if shortcut_scale_init_value > 0 else None

-

- self.level0 = Level(0, channels, layers, kernel_size, first_col, dp_rates)

-

- self.level1 = Level(1, channels, layers, kernel_size, first_col, dp_rates)

-

- self.level2 = Level(2, channels, layers, kernel_size, first_col, dp_rates)

-

- self.level3 = Level(3, channels, layers, kernel_size, first_col, dp_rates)

-

- def _forward_nonreverse(self, *args):

- x, c0, c1, c2, c3 = args

-

- c0 = (self.alpha0) * c0 + self.level0(x, c1)

- c1 = (self.alpha1) * c1 + self.level1(c0, c2)

- c2 = (self.alpha2) * c2 + self.level2(c1, c3)

- c3 = (self.alpha3) * c3 + self.level3(c2, None)

- return c0, c1, c2, c3

-

- def _forward_reverse(self, *args):

-

- local_funs = [self.level0, self.level1, self.level2, self.level3]

- alpha = [self.alpha0, self.alpha1, self.alpha2, self.alpha3]

- _, c0, c1, c2, c3 = ReverseFunction.apply(

- local_funs, alpha, *args)

-

- return c0, c1, c2, c3

-

- def forward(self, *args):

-

- self._clamp_abs(self.alpha0.data, 1e-3)

- self._clamp_abs(self.alpha1.data, 1e-3)

- self._clamp_abs(self.alpha2.data, 1e-3)

- self._clamp_abs(self.alpha3.data, 1e-3)

-

- if self.save_memory:

- return self._forward_reverse(*args)

- else:

- return self._forward_nonreverse(*args)

-

- def _clamp_abs(self, data, value):

- with torch.no_grad():

- sign = data.sign()

- data.abs_().clamp_(value)

- data *= sign

-

-

- class Classifier(nn.Module):

- def __init__(self, in_channels, num_classes):

- super().__init__()

-

- self.avgpool = nn.AdaptiveAvgPool2d((1, 1))

- self.classifier = nn.Sequential(

- nn.LayerNorm(in_channels, eps=1e-6), # final norm layer

- nn.Linear(in_channels, num_classes),

- )

-

- def forward(self, x):

- x = self.avgpool(x)

- x = x.view(x.size(0), -1)

- x = self.classifier(x)

- return x

-

-

- class FullNet(nn.Module):

- def __init__(self, channels=[32, 64, 96, 128], layers=[2, 3, 6, 3], num_subnet=5, kernel_size=3, drop_path=0.0,

- save_memory=True, inter_supv=True) -> None:

- super().__init__()

- self.num_subnet = num_subnet

- self.inter_supv = inter_supv

- self.channels = channels

- self.layers = layers

-

- self.stem = nn.Sequential(

- nn.Conv2d(3, channels[0], kernel_size=4, stride=4),

- LayerNorm(channels[0], eps=1e-6, data_format="channels_first")

- )

-

- dp_rate = [x.item() for x in torch.linspace(0, drop_path, sum(layers))]

- for i in range(num_subnet):

- first_col = True if i == 0 else False

- self.add_module(f'subnet{str(i)}', SubNet(

- channels, layers, kernel_size, first_col, dp_rates=dp_rate, save_memory=save_memory))

-

- self.apply(self._init_weights)

- self.width_list = [i.size(1) for i in self.forward(torch.randn(1, 3, 640, 640))]

-

- def forward(self, x):

-

- c0, c1, c2, c3 = 0, 0, 0, 0

- x = self.stem(x)

- for i in range(self.num_subnet):

- c0, c1, c2, c3 = getattr(self, f'subnet{str(i)}')(x, c0, c1, c2, c3)

- return [c0, c1, c2, c3]

-

- def _init_weights(self, module):

- if isinstance(module, nn.Conv2d):

- trunc_normal_(module.weight, std=.02)

- nn.init.constant_(module.bias, 0)

- elif isinstance(module, nn.Linear):

- trunc_normal_(module.weight, std=.02)

- nn.init.constant_(module.bias, 0)

-

-

- ##-------------------------------------- Tiny -----------------------------------------

-

- def revcol_tiny(save_memory=True, inter_supv=True, drop_path=0.1, kernel_size=3):

- channels = [64, 128, 256, 512]

- layers = [2, 2, 4, 2]

- num_subnet = 4

- return FullNet(channels, layers, num_subnet, drop_path=drop_path, save_memory=save_memory, inter_supv=inter_supv,

- kernel_size=kernel_size)

-

-

- ##-------------------------------------- Small -----------------------------------------

-

- def revcol_small(save_memory=True, inter_supv=True, drop_path=0.3, kernel_size=3):

- channels = [64, 128, 256, 512]

- layers = [2, 2, 4, 2]

- num_subnet = 8

- return FullNet(channels, layers, num_subnet, drop_path=drop_path, save_memory=save_memory, inter_supv=inter_supv,

- kernel_size=kernel_size)

-

-

- ##-------------------------------------- Base -----------------------------------------

-

- def revcol_base(save_memory=True, inter_supv=True, drop_path=0.4, kernel_size=3, head_init_scale=None):

- channels = [72, 144, 288, 576]

- layers = [1, 1, 3, 2]

- num_subnet = 16

- return FullNet(channels, layers, num_subnet, drop_path=drop_path, save_memory=save_memory, inter_supv=inter_supv,

- kernel_size=kernel_size)

-

-

- ##-------------------------------------- Large -----------------------------------------

-

- def revcol_large(save_memory=True, inter_supv=True, drop_path=0.5, kernel_size=3, head_init_scale=None):

- channels = [128, 256, 512, 1024]

- layers = [1, 2, 6, 2]

- num_subnet = 8

- return FullNet(channels, layers, num_subnet, drop_path=drop_path, save_memory=save_memory, inter_supv=inter_supv,

- kernel_size=kernel_size)

-

-

- ##--------------------------------------Extra-Large -----------------------------------------

- def revcol_xlarge(save_memory=True, inter_supv=True, drop_path=0.5, kernel_size=3, head_init_scale=None):

- channels = [224, 448, 896, 1792]

- layers = [1, 2, 6, 2]

- num_subnet = 8

- return FullNet(channels, layers, num_subnet, drop_path=drop_path, save_memory=save_memory, inter_supv=inter_supv,

- kernel_size=kernel_size)

-

- # model = revcol_xlarge(True)

- # # 示例输入

- # input = torch.randn(64, 3, 224, 224)

- # output = model(input)

- #

- # print(len(output))#torch.Size([3, 64, 224, 224])

四、手把手教你添加RevColV1机制

这个主干的网络结构添加起来算是所有的改进机制里最麻烦的了,因为有一些网略结构可以用yaml文件搭建出来,有一些网络结构其中的一些细节根本没有办法用yaml文件去搭建,用yaml文件去搭建会损失一些细节部分(而且一个网络结构设计很多细节的结构修改方式都不一样,一个一个去修改大家难免会出错),所以这里让网络直接返回整个网络,然后修改部分 yolo代码以后就都以这种形式添加了,以后我提出的网络模型基本上都会通过这种方式修改,我也会进行一些模型细节改进。创新出新的网络结构大家直接拿来用就可以的。 下面开始添加教程->

(同时每一个后面都有代码,大家拿来复制粘贴替换即可,但是要看好了不要复制粘贴替换多了)

4.1 修改一

我们复制网络结构代码到“ ultralytics /nn”目录下创建一个py文件复制粘贴进去 ,我这里起的名字是RevColV1。

4.2 修改二

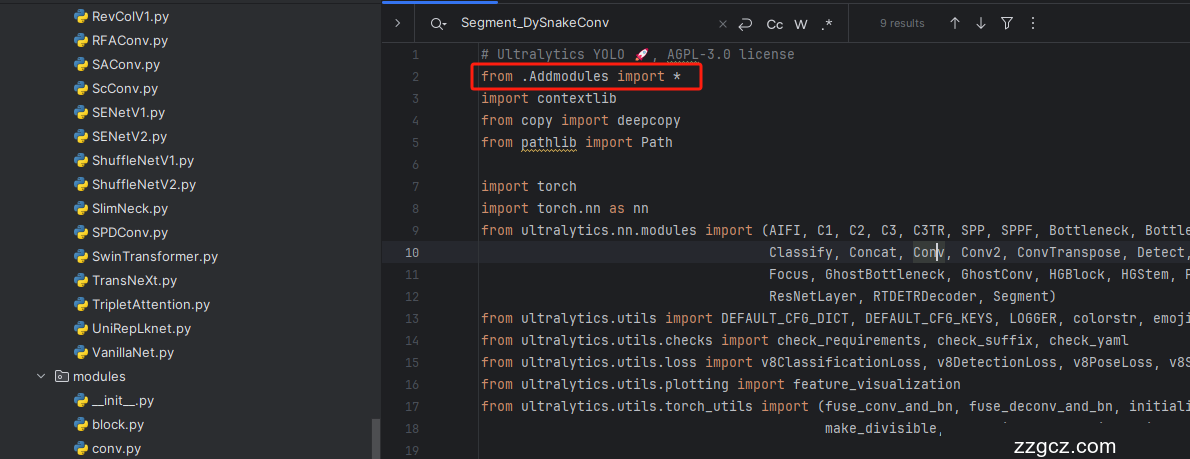

第二步我们在该目录下创建一个新的py文件名字为'__init__.py'( ,然后在其内部导入我们的检测头如下图所示。

4.3 修改三

第三步我门中到如下文件'ultralytics/nn/tasks.py'进行导入和注册我们的模块( !

4.4 修改四

添加如下两行代码!!!

4.5 修改五

找到七百多行大概把具体看图片,按照图片来修改就行,添加红框内的部分,注意没有()只是 函数 名,我这里只添加了部分的版本,大家有兴趣这个RevColV1还有更多的版本可以添加,看我给的代码函数头即可。

- elif m in {自行添加对应的模型即可,下面都是一样的}:

- m = m()

- c2 = m.width_list # 返回通道列表

- backbone = True

4.6 修改六

下面的两个红框内都是需要改动的。

- if isinstance(c2, list):

- m_ = m

- m_.backbone = True

- else:

- m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args) # module

- t = str(m)[8:-2].replace('__main__.', '') # module type

-

-

- m.np = sum(x.numel() for x in m_.parameters()) # number params

- m_.i, m_.f, m_.type = i + 4 if backbone else i, f, t # attach index, 'from' index, type

4.7 修改七

如下的也需要修改,全部按照我的来。

代码如下把原先的代码替换了即可。

- if verbose:

- LOGGER.info(f'{i:>3}{str(f):>20}{n_:>3}{m.np:10.0f} {t:<45}{str(args):<30}') # print

-

- save.extend(x % (i + 4 if backbone else i) for x in ([f] if isinstance(f, int) else f) if x != -1) # append to savelist

- layers.append(m_)

- if i == 0:

- ch = []

- if isinstance(c2, list):

- ch.extend(c2)

- if len(c2) != 5:

- ch.insert(0, 0)

- else:

- ch.append(c2)

4.8 修改八

修改七和前面的都不太一样,需要修改前向传播中的一个部分, 已经离开了parse_model方法了。

可以在图片中开代码行数,没有离开task.py文件都是同一个文件。 同时这个部分有好几个前向传播都很相似,大家不要看错了, 是70多行左右的!!!,同时我后面提供了代码,大家直接复制粘贴即可,有时间我针对这里会出一个视频。

代码如下->

- def _predict_once(self, x, profile=False, visualize=False, embed=None):

- """

- Perform a forward pass through the network.

- Args:

- x (torch.Tensor): The input tensor to the model.

- profile (bool): Print the computation time of each layer if True, defaults to False.

- visualize (bool): Save the feature maps of the model if True, defaults to False.

- embed (list, optional): A list of feature vectors/embeddings to return.

- Returns:

- (torch.Tensor): The last output of the model.

- """

- y, dt, embeddings = [], [], [] # outputs

- for m in self.model:

- if m.f != -1: # if not from previous layer

- x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f] # from earlier layers

- if profile:

- self._profile_one_layer(m, x, dt)

- if hasattr(m, 'backbone'):

- x = m(x)

- if len(x) != 5: # 0 - 5

- x.insert(0, None)

- for index, i in enumerate(x):

- if index in self.save:

- y.append(i)

- else:

- y.append(None)

- x = x[-1] # 最后一个输出传给下一层

- else:

- x = m(x) # run

- y.append(x if m.i in self.save else None) # save output

- if visualize:

- feature_visualization(x, m.type, m.i, save_dir=visualize)

- if embed and m.i in embed:

- embeddings.append(nn.functional.adaptive_avg_pool2d(x, (1, 1)).squeeze(-1).squeeze(-1)) # flatten

- if m.i == max(embed):

- return torch.unbind(torch.cat(embeddings, 1), dim=0)

- return x

到这里就完成了修改部分,但是这里面细节很多,大家千万要注意不要替换多余的代码,导致报错,也不要拉下任何一部,都会导致运行失败,而且报错很难排查!!!很难排查!!!

4.9 修改九

我们找到如下文件'ultralytics/utils/torch_utils.py'按照如下的图片进行修改,否则容易打印不出来计算量。

五、RevColV1的yaml文件

复制如下yaml文件进行运行!!!

此版本训练信息:YOLO11-RevColV1 summary: 632 layers, 31,831,739 parameters, 31,831,723 gradients, 77.9 GFLOPs

# 本文建议改进机制模型为YOLOv11-l的读者使用.

- # Ultralytics YOLO 🚀, AGPL-3.0 license

- # YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

-

- # Parameters

- nc: 80 # number of classes

- scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'

- # [depth, width, max_channels]

- n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs

- s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs

- m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs

- l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs

- x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

-

- # 共四个版本 "revcol_tiny, revcol_base, revcol_small, revcol_large, revcol_xlarge"

- # YOLO11n backbone

- backbone:

- # [from, repeats, module, args]

- - [-1, 1, revcol_tiny, []] # 0-4 P1/2

- - [-1, 1, SPPF, [1024, 5]] # 5

- - [-1, 2, C2PSA, [1024]] # 6

-

- # YOLO11n head

- head:

- - [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- - [[-1, 3], 1, Concat, [1]] # cat backbone P4

- - [-1, 2, C3k2, [512, False]] # 9

-

- - [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- - [[-1, 2], 1, Concat, [1]] # cat backbone P3

- - [-1, 2, C3k2, [256, False]] # 12 (P3/8-small)

-

- - [-1, 1, Conv, [256, 3, 2]]

- - [[-1, 9], 1, Concat, [1]] # cat head P4

- - [-1, 2, C3k2, [512, False]] # 15 (P4/16-medium)

-

- - [-1, 1, Conv, [512, 3, 2]]

- - [[-1, 6], 1, Concat, [1]] # cat head P5

- - [-1, 2, C3k2, [1024, True]] # 18 (P5/32-large)

-

- - [[12, 15, 18], 1, Detect, [nc]] # Detect(P3, P4, P5)

六、成功运行记录

下面是成功运行的截图,已经完成了有1个epochs的训练,图片太大截不全第2个epochs了。

七、本文总结

到此本文的正式分享内容就结束了,在这里给大家推荐我的YOLOv11改进有效涨点专栏,本专栏目前为新开的平均质量分98分,后期我会根据各种最新的前沿顶会进行论文复现,也会对一些老的改进机制进行补充, 目前本专栏免费阅读(暂时,大家尽早关注不迷路~) , 如果大家觉得本文帮助到你了,订阅本专栏,关注后续更多的更新~