I. Introduction

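The improvement introduced in this article is DynamicHead (DyHead), a new detection head proposed by Microsoft, called the "dynamic head", that unifies scale-awareness, spatial-awareness and task-awareness. I looked at several versions of this head online, but they are heavily modified, and in my view those changes alter what the head actually is: good results often come down to details. I therefore ported the official code to YOLOv11 for my experiments. The head has a few usage details that need attention, and with them handled it gave a substantial accuracy gain in my experiments, which shows how much a detection head can contribute to a model's accuracy.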

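Also note that the version released here differs from the modified versions found online, so do not judge it by the results of those versions.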

You are welcome to subscribe to my column and learn YOLO together!


II. Overview of the DynamicHead framework

Official paper: Dynamic Head: Unifying Object Detection Heads with Attentions (CVPR 2021)

Official code: the microsoft/DynamicHead repository on GitHub

2.1 Core idea of DynamicHead

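The core idea of the paper is a new detection head, called the "dynamic head", that unifies scale-awareness, spatial-awareness and task-awareness. The paper treats the input of an object detection head as a 3D tensor with three dimensions: level × space × channel. The authors argue that such a unified head can be viewed as an attention-learning problem. In principle one could build full self-attention over this tensor, but that optimization problem is hard to solve and far too expensive to compute. They therefore deploy attention separately on each particular dimension of the feature.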
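Written in the paper's notation, the per-dimension decomposition applies the three attentions one after another, each acting on the output of the previous one, where $\pi_L$, $\pi_S$ and $\pi_C$ are the attention functions on the level, spatial and channel dimensions:

$$
W(\mathcal{F}) = \pi_C\Big(\pi_S\big(\pi_L(\mathcal{F})\cdot\mathcal{F}\big)\cdot\mathcal{F}\Big)\cdot\mathcal{F},
\qquad \mathcal{F}\in\mathbb{R}^{L\times S\times C}
$$

Each factor only has to model one dimension, which is what keeps the cost far below full self-attention over all $L\cdot S\cdot C$ entries.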

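The main contributions of the paper fall into the following areas:

1. Task-aware attention: a way to deploy attention in the detection head that can adaptively favor different tasks, whether in one-stage or two-stage detectors, or in box-, center- or keypoint-based detectors.

2. Scale- and spatial-awareness: a unified object detection head that enables scale-awareness, spatial-awareness and task-awareness at the same time. The head can therefore handle objects of very different scales that coexist in one image, while taking into account that objects often appear with different shapes, rotations and positions under different viewpoints.

3. Spatial-aware attention: attention in the detection head that is not only applied to each spatial location but also adaptively aggregates multiple feature levels to learn more discriminative representations.

Summary: the paper's novelty lies in fusing scale-, spatial- and task-awareness into one unified framework and applying attention effectively in the detection head, which improves detection performance.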

2.2 DynamicHead framework diagram

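The figure above is Figure 1 of the paper and shows the framework of the Dynamic Head approach. It contains three attention mechanisms, each focusing on a different aspect: scale-aware attention, spatial-aware attention and task-aware attention. The figure also shows how the feature maps improve after each attention module.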

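1. Scale-aware attention: operates on the feature-level dimension (L). Before the head, the feature pyramid is resized to a common scale to form a 3D tensor $\mathcal{F}\in\mathbb{R}^{L\times S\times C}$, which is the input to the dynamic head.

2. Spatial-aware attention: operates on spatial positions (S) and aggregates features so that the head focuses on discriminative regions of the image. Multiple DyHead blocks, each containing scale-, spatial- and task-aware attention, are stacked one after another.

3. Task-aware attention: operates on channels (C), dynamically switching feature channels on or off to favor different tasks. The output of the dynamic head can then be used for different tasks and representations in object detection, such as classification and center/box regression.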
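The step that builds the $L\times S\times C$ input tensor can be sketched in PyTorch as follows. This is a minimal illustration, not the paper's code: it assumes the median pyramid level is used as the common scale and bilinear interpolation for resizing, and it keeps a leading batch dimension. `pyramid_to_tensor` is a hypothetical helper name.

```python
import torch
import torch.nn.functional as F


def pyramid_to_tensor(levels):
    """Hypothetical helper: resize all pyramid levels to the median level's size
    and stack them into a (B, L, S, C) tensor with S = H * W."""
    median = levels[len(levels) // 2]
    h, w = median.shape[-2:]
    resized = [
        F.interpolate(x, size=(h, w), mode="bilinear", align_corners=False)
        for x in levels
    ]
    stacked = torch.stack(resized, dim=1)  # (B, L, C, H, W)
    return stacked.flatten(3).transpose(2, 3)  # (B, L, H*W, C)


# Three levels with the same channel count, as the head in this article expects
levels = [torch.rand(1, 64, 32, 32), torch.rand(1, 64, 16, 16), torch.rand(1, 64, 8, 8)]
print(pyramid_to_tensor(levels).shape)
```

For the three 64-channel levels above, the result has L = 3, S = 16 × 16 = 256 and C = 64.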

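As the figure shows, the initial feature maps tend to be noisy because of domain differences. After the three attention mechanisms of the dynamic head are applied, the feature maps become cleaner and more focused. Each attention step refines the feature representation further, giving a better basis for the different object detection tasks.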

2.3 Components of DynamicHead

The figure above (from the paper) gives the details of the Dynamic Head design:

(a) DyHead block:

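- Describes the detailed implementation of each attention module. Scale-aware attention ($\pi_L$), spatial-aware attention ($\pi_S$) and task-aware attention ($\pi_C$) are separate modules, each targeting a different dimension of the feature tensor $\mathcal{F}$ (level L, space S, channel C).

- The scale-aware attention module uses average pooling, a 1×1 convolution, a ReLU activation and a hard sigmoid.

- The spatial-aware attention module consists of offset learning and a 3×3 convolution.

- The task-aware attention module uses fully connected layers, a ReLU activation and a normalization step.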

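The modified versions found online strip this part down, which means they change the very core of the head, so their results are mediocre at best. They strip it because the head needs care in use: unless a few details are handled, it keeps throwing errors.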
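To make the scale-aware part concrete, here is a minimal standalone sketch of what `DyConv.forward` (Section III) does after the per-level convolutions: each already-aligned feature gets one scalar weight from average pooling, a 1×1 convolution, ReLU and the hard sigmoid `relu6(x + 3) / 6`, and the weighted features are averaged over levels. `ScaleAwareFusion` is a hypothetical name used only for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class ScaleAwareFusion(nn.Module):
    """Hypothetical sketch of the scale-aware attention inside DyConv."""

    def __init__(self, channels):
        super().__init__()
        # Same layers as DyConv.AttnConv: pool -> 1x1 conv to one channel -> ReLU
        self.attn = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),
            nn.Conv2d(channels, 1, kernel_size=1),
            nn.ReLU(inplace=True),
        )

    def forward(self, feats):
        # feats: list of L tensors, each (B, C, H, W), already resized to one scale
        stacked = torch.stack(feats)  # (L, B, C, H, W)
        weights = torch.stack([self.attn(f) for f in feats])  # (L, B, 1, 1, 1)
        weights = F.relu6(weights + 3.0) / 6.0  # hard sigmoid, as in h_sigmoid
        return (stacked * weights).mean(dim=0)  # (B, C, H, W)


fusion = ScaleAwareFusion(16)
feats = [torch.randn(2, 16, 8, 8) for _ in range(3)]
print(fusion(feats).shape)
```

Because the weight is a single scalar per level and image, this attention only decides how much each pyramid level contributes; where to look and which channels to keep are left to the spatial- and task-aware parts.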

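(b) Applied to one-stage detectors:

- Shows how multiple DyHead blocks are applied to a one-stage object detector; the output can be used for different tasks such as classification, center regression, box regression and keypoint regression.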

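(c) Applied to two-stage detectors:

- Shows how DyHead blocks are applied to a two-stage object detector. Here, scale-aware and spatial-aware attention are applied before the ROI pooling layer, and after ROI pooling, task-aware attention replaces the original fully connected layers.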
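The task-aware attention is implemented in Section III by the `DYReLU` class. A trimmed-down sketch of its default branch (four coefficients per channel) is below: a small hyper-network on globally pooled features predicts per-channel slopes and biases, and the output is the larger of two affine functions, so a channel can be passed through, damped or switched off. `TaskAwareAttention` is a hypothetical name, and this is a simplification of `DYReLU`, not the paper's reference code.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class TaskAwareAttention(nn.Module):
    """Hypothetical simplification of DYReLU (exp == 4 branch):
    out = max(a1 * x + b1, a2 * x + b2) with per-channel a1, b1, a2, b2."""

    def __init__(self, channels, reduction=4, lambda_a=1.0):
        super().__init__()
        self.channels = channels
        self.lambda_a = lambda_a * 2  # same scaling as DYReLU
        self.fc = nn.Sequential(
            nn.Linear(channels, channels // reduction),
            nn.ReLU(inplace=True),
            nn.Linear(channels // reduction, channels * 4),
        )

    def forward(self, x):
        b, c, _, _ = x.shape
        y = F.adaptive_avg_pool2d(x, 1).view(b, c)
        y = F.relu6(self.fc(y) + 3.0) / 6.0  # hard sigmoid -> values in [0, 1]
        a1, b1, a2, b2 = y.view(b, 4 * c, 1, 1).split(c, dim=1)
        a1 = (a1 - 0.5) * self.lambda_a + 1.0  # init_a[0] = 1.0
        a2 = (a2 - 0.5) * self.lambda_a + 0.0  # init_a[1] = 0.0
        b1 = b1 - 0.5  # init_b = [0.0, 0.0]
        b2 = b2 - 0.5
        return torch.max(x * a1 + b1, x * a2 + b2)


att = TaskAwareAttention(8)
print(att(torch.randn(2, 8, 5, 5)).shape)
```

The offsets (`- 0.5` and the `init_a` values) are chosen so that when the hyper-network outputs 0.5 everywhere, the module reduces to a plain ReLU, which gives a stable starting point for training.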

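Overall, the design lets the dynamic head adapt flexibly to different types of object detectors: for both one-stage and two-stage detectors, it improves performance by supporting different types of predictions. This makes the dynamic head not only improve detector accuracy but also adapt to different detection tasks, showing a high degree of flexibility and extensibility.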

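III. Core code of DynamicHead

Below is the core code of DynamicHead; see Section IV for how to use it. It depends on mmcv for `ModulatedDeformConv2d` (from `mmcv.ops`). Note also that this head's yaml file differs from the others: all three inputs to the head must have the same number of channels, because every DyConv block in the tower applies the same convolutions to every pyramid level. Copy the yaml file from Section V and run it as is.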

```python
import copy
import math

import torch
import torch.nn as nn
import torch.nn.functional as F
from mmcv.ops import ModulatedDeformConv2d  # requires mmcv built with its ops

from ultralytics.utils.tal import dist2bbox, make_anchors

__all__ = ['DynamicHead']


def _make_divisible(v, divisor, min_value=None):
    if min_value is None:
        min_value = divisor
    new_v = max(min_value, int(v + divisor / 2) // divisor * divisor)
    # Make sure that round down does not go down by more than 10%.
    if new_v < 0.9 * v:
        new_v += divisor
    return new_v


class h_swish(nn.Module):
    def __init__(self, inplace=False):
        super(h_swish, self).__init__()
        self.inplace = inplace

    def forward(self, x):
        return x * F.relu6(x + 3.0, inplace=self.inplace) / 6.0


class h_sigmoid(nn.Module):
    def __init__(self, inplace=True, h_max=1):
        super(h_sigmoid, self).__init__()
        self.relu = nn.ReLU6(inplace=inplace)
        self.h_max = h_max

    def forward(self, x):
        return self.relu(x + 3) * self.h_max / 6


class DYReLU(nn.Module):
    """Dynamic ReLU; used by DyConv as the task-aware attention."""

    def __init__(self, inp, oup, reduction=4, lambda_a=1.0, K2=True, use_bias=True, use_spatial=False,
                 init_a=[1.0, 0.0], init_b=[0.0, 0.0]):
        super(DYReLU, self).__init__()
        self.oup = oup
        self.lambda_a = lambda_a * 2
        self.K2 = K2
        self.avg_pool = nn.AdaptiveAvgPool2d(1)

        self.use_bias = use_bias
        if K2:
            self.exp = 4 if use_bias else 2
        else:
            self.exp = 2 if use_bias else 1
        self.init_a = init_a
        self.init_b = init_b

        # determine squeeze
        if reduction == 4:
            squeeze = inp // reduction
        else:
            squeeze = _make_divisible(inp // reduction, 4)

        self.fc = nn.Sequential(
            nn.Linear(inp, squeeze),
            nn.ReLU(inplace=True),
            nn.Linear(squeeze, oup * self.exp),
            h_sigmoid()
        )
        if use_spatial:
            self.spa = nn.Sequential(
                nn.Conv2d(inp, 1, kernel_size=1),
                nn.BatchNorm2d(1),
            )
        else:
            self.spa = None

    def forward(self, x):
        if isinstance(x, list):
            x_in = x[0]
            x_out = x[1]
        else:
            x_in = x
            x_out = x
        b, c, h, w = x_in.size()
        y = self.avg_pool(x_in).view(b, c)
        y = self.fc(y).view(b, self.oup * self.exp, 1, 1)
        if self.exp == 4:
            a1, b1, a2, b2 = torch.split(y, self.oup, dim=1)
            a1 = (a1 - 0.5) * self.lambda_a + self.init_a[0]  # 1.0
            a2 = (a2 - 0.5) * self.lambda_a + self.init_a[1]

            b1 = b1 - 0.5 + self.init_b[0]
            b2 = b2 - 0.5 + self.init_b[1]
            out = torch.max(x_out * a1 + b1, x_out * a2 + b2)
        elif self.exp == 2:
            if self.use_bias:  # bias but not PL
                a1, b1 = torch.split(y, self.oup, dim=1)
                a1 = (a1 - 0.5) * self.lambda_a + self.init_a[0]  # 1.0
                b1 = b1 - 0.5 + self.init_b[0]
                out = x_out * a1 + b1
            else:
                a1, a2 = torch.split(y, self.oup, dim=1)
                a1 = (a1 - 0.5) * self.lambda_a + self.init_a[0]  # 1.0
                a2 = (a2 - 0.5) * self.lambda_a + self.init_a[1]
                out = torch.max(x_out * a1, x_out * a2)
        elif self.exp == 1:
            a1 = y
            a1 = (a1 - 0.5) * self.lambda_a + self.init_a[0]  # 1.0
            out = x_out * a1

        if self.spa is not None:
            ys = self.spa(x_in).view(b, -1)
            ys = F.softmax(ys, dim=1).view(b, 1, h, w) * h * w
            ys = F.hardtanh(ys, 0, 3, inplace=True) / 3
            out = out * ys

        return out


class Conv3x3Norm(torch.nn.Module):
    """3x3 modulated deformable conv + GroupNorm (out_channels must be divisible by 16)."""

    def __init__(self, in_channels, out_channels, stride):
        super(Conv3x3Norm, self).__init__()

        self.conv = ModulatedDeformConv2d(in_channels, out_channels, kernel_size=3, stride=stride, padding=1)
        self.bn = nn.GroupNorm(num_groups=16, num_channels=out_channels)

    def forward(self, input, **kwargs):
        x = self.conv(input.contiguous(), **kwargs)
        x = self.bn(x)
        return x


class DyConv(nn.Module):
    """One DyHead block: spatial-, scale- and task-aware attention over all pyramid levels."""

    def __init__(self, in_channels=256, out_channels=256, conv_func=Conv3x3Norm):
        super(DyConv, self).__init__()

        self.DyConv = nn.ModuleList()
        self.DyConv.append(conv_func(in_channels, out_channels, 1))  # next (lower-resolution) level
        self.DyConv.append(conv_func(in_channels, out_channels, 1))  # current level
        self.DyConv.append(conv_func(in_channels, out_channels, 2))  # previous (higher-resolution) level

        # Scale-aware attention: one scalar weight per level
        self.AttnConv = nn.Sequential(
            nn.AdaptiveAvgPool2d(1),
            nn.Conv2d(in_channels, 1, kernel_size=1),
            nn.ReLU(inplace=True))

        self.h_sigmoid = h_sigmoid()
        self.relu = DYReLU(in_channels, out_channels)  # task-aware attention
        # Spatial-aware attention: 27 = 18 offset channels (2 x 3 x 3) + 9 mask channels
        self.offset = nn.Conv2d(in_channels, 27, kernel_size=3, stride=1, padding=1)
        self.init_weights()

    def init_weights(self):
        for m in self.DyConv.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.normal_(m.weight.data, 0, 0.01)
                if m.bias is not None:
                    m.bias.data.zero_()
        for m in self.AttnConv.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.normal_(m.weight.data, 0, 0.01)
                if m.bias is not None:
                    m.bias.data.zero_()

    def forward(self, x):
        """x: dict {level: (B, C, H, W)}, ordered from the highest to the lowest resolution."""
        next_x = {}
        feature_names = list(x.keys())
        for level, name in enumerate(feature_names):
            feature = x[name]

            # Offsets and modulation masks are predicted from the current level
            offset_mask = self.offset(feature)
            offset = offset_mask[:, :18, :, :]
            mask = offset_mask[:, 18:, :, :].sigmoid()
            conv_args = dict(offset=offset, mask=mask)

            temp_fea = [self.DyConv[1](feature, **conv_args)]
            if level > 0:
                temp_fea.append(self.DyConv[2](x[feature_names[level - 1]], **conv_args))
            if level < len(x) - 1:
                input = x[feature_names[level + 1]]
                temp_fea.append(F.interpolate(self.DyConv[0](input, **conv_args),
                                              size=[feature.size(2), feature.size(3)]))
            attn_fea = []
            res_fea = []
            for fea in temp_fea:
                res_fea.append(fea)
                attn_fea.append(self.AttnConv(fea))

            # Scale-aware attention: weight each level with a hard sigmoid, then average
            res_fea = torch.stack(res_fea)
            spa_pyr_attn = self.h_sigmoid(torch.stack(attn_fea))
            mean_fea = torch.mean(res_fea * spa_pyr_attn, dim=0, keepdim=False)
            next_x[name] = self.relu(mean_fea)  # task-aware attention (DyReLU)

        return next_x


def autopad(k, p=None, d=1):  # kernel, padding, dilation
    """Pad to 'same' shape outputs."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""
    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        """Initialize Conv layer with given arguments including activation."""
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        """Apply convolution, batch normalization and activation to input tensor."""
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        """Apply convolution and activation (BatchNorm already fused into the conv)."""
        return self.act(self.conv(x))


class DFL(nn.Module):
    """
    Integral module of Distribution Focal Loss (DFL).
    Proposed in Generalized Focal Loss https://ieeexplore.ieee.org/document/9792391
    """

    def __init__(self, c1=16):
        """Initialize a convolutional layer with a given number of input channels."""
        super().__init__()
        self.conv = nn.Conv2d(c1, 1, 1, bias=False).requires_grad_(False)
        x = torch.arange(c1, dtype=torch.float)
        self.conv.weight.data[:] = nn.Parameter(x.view(1, c1, 1, 1))
        self.c1 = c1

    def forward(self, x):
        """Turn each box-side distribution into its expected value."""
        b, c, a = x.shape  # batch, channels, anchors
        return self.conv(x.view(b, 4, self.c1, a).transpose(2, 1).softmax(1)).view(b, 4, a)


class DWConv(Conv):
    """Depth-wise convolution."""

    def __init__(self, c1, c2, k=1, s=1, d=1, act=True):  # ch_in, ch_out, kernel, stride, dilation, activation
        """Initialize Depth-wise convolution with given parameters."""
        super().__init__(c1, c2, k, s, g=math.gcd(c1, c2), d=d, act=act)


class DynamicHead(nn.Module):
    """YOLOv8/YOLO11-style Detect head preceded by a DyHead tower. CSDNSnu77"""

    dynamic = False  # force grid reconstruction
    export = False  # export mode
    format = None  # export format
    end2end = False  # end2end
    max_det = 300  # max_det
    shape = None
    anchors = torch.empty(0)  # init
    strides = torch.empty(0)  # init

    def __init__(self, nc=80, ch=()):
        """Initializes the detection layer with specified number of classes and channels."""
        super().__init__()
        self.nc = nc  # number of classes
        self.nl = len(ch)  # number of detection layers
        self.reg_max = 16  # DFL channels (ch[0] // 16 to scale 4/8/12/16/20 for n/s/m/l/x)
        self.no = nc + self.reg_max * 4  # number of outputs per anchor
        self.stride = torch.zeros(self.nl)  # strides computed during build
        c2, c3 = max((16, ch[0] // 4, self.reg_max * 4)), max(ch[0], min(self.nc, 100))  # channels
        self.cv2 = nn.ModuleList(
            nn.Sequential(Conv(x, c2, 3), Conv(c2, c2, 3), nn.Conv2d(c2, 4 * self.reg_max, 1)) for x in ch
        )
        self.cv3 = nn.ModuleList(
            nn.Sequential(
                nn.Sequential(DWConv(x, x, 3), Conv(x, c3, 1)),
                nn.Sequential(DWConv(c3, c3, 3), Conv(c3, c3, 1)),
                nn.Conv2d(c3, self.nc, 1),
            )
            for x in ch
        )
        self.dfl = DFL(self.reg_max) if self.reg_max > 1 else nn.Identity()

        # nl stacked DyConv blocks; each block processes every level, so all ch[i] must be equal
        dyhead_tower = []
        for i in range(self.nl):
            channel = ch[i]
            dyhead_tower.append(
                DyConv(
                    channel,
                    channel,
                    conv_func=Conv3x3Norm,
                )
            )
        self.add_module('dyhead_tower', nn.Sequential(*dyhead_tower))

        if self.end2end:
            self.one2one_cv2 = copy.deepcopy(self.cv2)
            self.one2one_cv3 = copy.deepcopy(self.cv3)

    def forward(self, x):
        """Run the DyHead tower, then concatenate and return predicted bounding boxes and class probabilities."""
        tensor_dict = {i: tensor for i, tensor in enumerate(x)}
        x = self.dyhead_tower(tensor_dict)
        x = list(x.values())
        if self.end2end:
            return self.forward_end2end(x)
        for i in range(self.nl):
            x[i] = torch.cat((self.cv2[i](x[i]), self.cv3[i](x[i])), 1)
        if self.training:  # Training path
            return x
        y = self._inference(x)
        return y if self.export else (y, x)

    def forward_end2end(self, x):
        """
        Performs forward pass of the v10Detect module.
        Args:
            x (tensor): Input tensor.
        Returns:
            (dict, tensor): If not in training mode, returns a dictionary containing the outputs of both one2many and one2one detections.
                If in training mode, returns a dictionary containing the outputs of one2many and one2one detections separately.
        """
        x_detach = [xi.detach() for xi in x]
        one2one = [
            torch.cat((self.one2one_cv2[i](x_detach[i]), self.one2one_cv3[i](x_detach[i])), 1) for i in range(self.nl)
        ]
        for i in range(self.nl):
            x[i] = torch.cat((self.cv2[i](x[i]), self.cv3[i](x[i])), 1)
        if self.training:  # Training path
            return {"one2many": x, "one2one": one2one}

        y = self._inference(one2one)
        y = self.postprocess(y.permute(0, 2, 1), self.max_det, self.nc)
        return y if self.export else (y, {"one2many": x, "one2one": one2one})

    def _inference(self, x):
        """Decode predicted bounding boxes and class probabilities based on multiple-level feature maps."""
        # Inference path
        shape = x[0].shape  # BCHW
        x_cat = torch.cat([xi.view(shape[0], self.no, -1) for xi in x], 2)
        if self.dynamic or self.shape != shape:
            self.anchors, self.strides = (x.transpose(0, 1) for x in make_anchors(x, self.stride, 0.5))
            self.shape = shape

        if self.export and self.format in {"saved_model", "pb", "tflite", "edgetpu", "tfjs"}:  # avoid TF FlexSplitV ops
            box = x_cat[:, : self.reg_max * 4]
            cls = x_cat[:, self.reg_max * 4 :]
        else:
            box, cls = x_cat.split((self.reg_max * 4, self.nc), 1)

        if self.export and self.format in {"tflite", "edgetpu"}:
            # Precompute normalization factor to increase numerical stability
            # See https://github.com/ultralytics/ultralytics/issues/7371
            grid_h = shape[2]
            grid_w = shape[3]
            grid_size = torch.tensor([grid_w, grid_h, grid_w, grid_h], device=box.device).reshape(1, 4, 1)
            norm = self.strides / (self.stride[0] * grid_size)
            dbox = self.decode_bboxes(self.dfl(box) * norm, self.anchors.unsqueeze(0) * norm[:, :2])
        else:
            dbox = self.decode_bboxes(self.dfl(box), self.anchors.unsqueeze(0)) * self.strides

        return torch.cat((dbox, cls.sigmoid()), 1)

    def bias_init(self):
        """Initialize Detect() biases, WARNING: requires stride availability."""
        m = self  # self.model[-1]  # Detect() module
        for a, b, s in zip(m.cv2, m.cv3, m.stride):  # from
            a[-1].bias.data[:] = 1.0  # box
            b[-1].bias.data[: m.nc] = math.log(5 / m.nc / (640 / s) ** 2)  # cls (.01 objects, 80 classes, 640 img)
        if self.end2end:
            for a, b, s in zip(m.one2one_cv2, m.one2one_cv3, m.stride):  # from
                a[-1].bias.data[:] = 1.0  # box
                b[-1].bias.data[: m.nc] = math.log(5 / m.nc / (640 / s) ** 2)  # cls (.01 objects, 80 classes, 640 img)

    def decode_bboxes(self, bboxes, anchors):
        """Decode bounding boxes."""
        return dist2bbox(bboxes, anchors, xywh=not self.end2end, dim=1)

    @staticmethod
    def postprocess(preds: torch.Tensor, max_det: int, nc: int = 80):
        """
        Post-processes YOLO model predictions.
        Args:
            preds (torch.Tensor): Raw predictions with shape (batch_size, num_anchors, 4 + nc) with last dimension
                format [x, y, w, h, class_probs].
            max_det (int): Maximum detections per image.
            nc (int, optional): Number of classes. Default: 80.
        Returns:
            (torch.Tensor): Processed predictions with shape (batch_size, min(max_det, num_anchors), 6) and last
                dimension format [x, y, w, h, max_class_prob, class_index].
        """
        batch_size, anchors, _ = preds.shape  # i.e. shape(16,8400,84)
        boxes, scores = preds.split([4, nc], dim=-1)
        index = scores.amax(dim=-1).topk(min(max_det, anchors))[1].unsqueeze(-1)
        boxes = boxes.gather(dim=1, index=index.repeat(1, 1, 4))
        scores = scores.gather(dim=1, index=index.repeat(1, 1, nc))
        scores, index = scores.flatten(1).topk(min(max_det, anchors))
        i = torch.arange(batch_size)[..., None]  # batch indices
        return torch.cat([boxes[i, index // nc], scores[..., None], (index % nc)[..., None].float()], dim=-1)


if __name__ == "__main__":
    # Generate sample feature maps (CSDN Snu77)
    image1 = torch.rand(1, 64, 32, 32)
    image2 = torch.rand(1, 64, 16, 16)
    image3 = torch.rand(1, 64, 8, 8)
    image = [image1, image2, image3]
    channel = (64, 64, 64)
    # Model: unified scale-aware detection head
    model = DynamicHead(nc=80, ch=channel)

    out = model(image)  # training mode: list of per-level outputs
    for o in out:
        print(o.shape)
```

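IV. Step-by-step: adding the DynamicHead detection head

4.1 Modification 1

First, create a new .py file in the 'ultralytics/nn' directory and paste the code above into it. You can choose the file name yourself; I named it DynamicHead.py.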

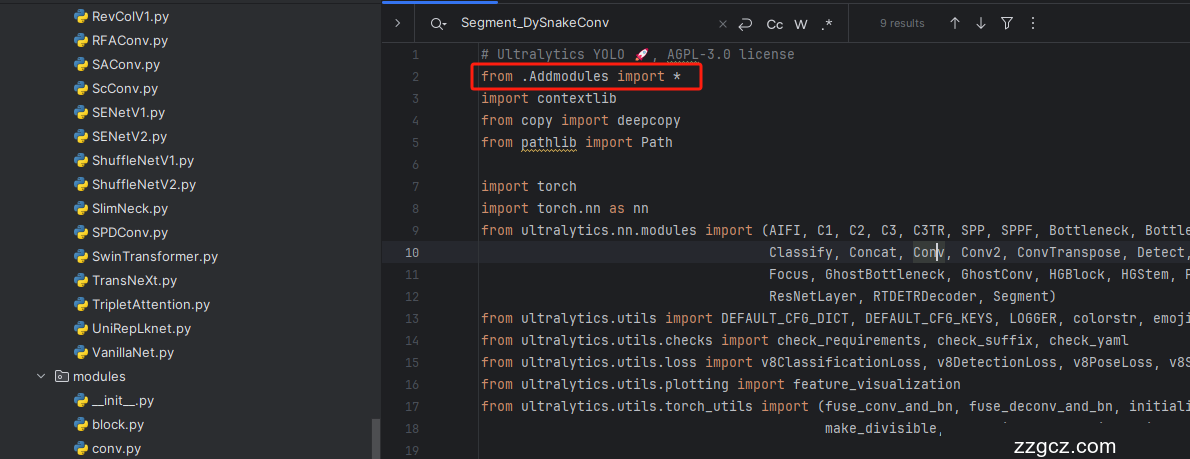

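4.2 Modification 2

Second, create a new file named '__init__.py' in that directory and import our detection head in it.

4.3 Modification 3

Third, open 'ultralytics/nn/tasks.py' and import and register our module there.

4.4 Modification 4

Fourth, still in 'ultralytics/nn/tasks.py', find the code that handles Detect and add the detection head there. Tip: press Ctrl+F and search for Detect to find it quickly.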
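Why this registration matters: in the ultralytics versions I am aware of, `parse_model` gives detection heads an extra argument, the list of channel counts of the layers they read from (roughly `args.append([ch[x] for x in f])`); the exact code differs between versions. Adding DynamicHead to that branch is what turns the yaml row `[[16, 19, 22], 1, DynamicHead, [nc]]` into `DynamicHead(nc, [c16, c19, c22])`. The function below is a hypothetical, simplified stand-in for that branch, not the real `parse_model`, and the channel values are illustrative.

```python
def build_head_args(module_name, from_layers, args, ch,
                    head_modules=frozenset({"Detect", "DynamicHead"})):
    """Hypothetical stand-in for the detect-head branch of parse_model."""
    args = list(args)  # copy so the yaml args are not mutated
    if module_name in head_modules:
        # detection heads also receive the channel count of every input layer
        args.append([ch[i] for i in from_layers])
    return args


# Illustrative output channels of layers 16, 19 and 22 for scale 'n'
ch = {16: 64, 19: 64, 22: 64}
print(build_head_args("DynamicHead", [16, 19, 22], [80], ch))
```

If DynamicHead were missing from the set, it would be built as `DynamicHead(80)` with an empty channel tuple, which is why the head has to be registered alongside Detect.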

4.5 Modification 5

4.6 Modification 6

Same as above.

4.7 Modification 7

This step is a little different: we need to add a line of code:

```python
else:
    return 'detect'
```

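Why is it different? Because m (the module name) is converted to lowercase while this code runs, so a plain else branch is the simplest option. When later tutorials cover segmentation or other tasks, I will provide the corresponding changes.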
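A hypothetical, simplified re-creation of the task-guessing logic makes the lowercase point concrete: the last head row's module name is lowercased, so `DynamicHead` becomes `"dynamichead"`, which matches none of the explicit names, and the added `else` maps it to detection. The function name and the list of branches are assumptions modeled on ultralytics' helper, not a copy of it.

```python
def cfg2task(cfg):
    """Hypothetical sketch of guessing the task from a model yaml dict."""
    m = cfg["head"][-1][-2].lower()  # output module name, e.g. "dynamichead"
    if m in {"classify", "classifier", "cls", "fc"}:
        return "classify"
    if m == "detect":
        return "detect"
    if m == "segment":
        return "segment"
    if m == "pose":
        return "pose"
    if m == "obb":
        return "obb"
    else:
        return "detect"  # added line: any other head (e.g. DynamicHead) counts as detection


cfg = {"head": [[[16, 19, 22], 1, "DynamicHead", ["nc"]]]}
print(cfg2task(cfg))
```

The catch-all is convenient but also means any unrecognized head is treated as a detector, which is the trade-off the article accepts until other tasks are covered.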

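4.8 Modification 8

Same as above.

That completes the modifications. You can copy the yaml file below and run it.

V. yaml file for the DynamicHead detection head

Training summary for this version: YOLO11-DynamicHead summary: 399 layers, 2,271,959 parameters, 2,271,943 gradients, 6.2 GFLOPs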

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [256, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [256, False]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [256, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, DynamicHead, [nc]] # Detect(P3, P4, P5), unified scale-aware dynamic head
```
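The key difference from the stock YOLO11 yaml is that layers 16, 19 and 22 all output 256 channels (before width scaling), whereas stock YOLO11 uses 256, 512 and 1024. The sketch below reproduces the width-scaling rule used by ultralytics' `parse_model`, `make_divisible(min(c, max_channels) * width, 8)`, to show that this yaml gives DynamicHead equal channel counts at every scale (and counts divisible by 16, as `Conv3x3Norm`'s `GroupNorm(num_groups=16)` requires), while the stock head widths would not. `head_input_channels` is a hypothetical helper.

```python
import math

# (depth, width, max_channels) per scale, copied from the yaml above
SCALES = {
    "n": (0.50, 0.25, 1024),
    "s": (0.50, 0.50, 1024),
    "m": (0.50, 1.00, 512),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.50, 512),
}


def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


def head_input_channels(yaml_channels, scale):
    """Hypothetical helper: actual channel counts of the layers feeding the head."""
    _, width, max_channels = SCALES[scale]
    return [make_divisible(min(c, max_channels) * width, 8) for c in yaml_channels]


for s in SCALES:
    chans = head_input_channels([256, 256, 256], s)  # this article's yaml
    ok = len(set(chans)) == 1 and all(c % 16 == 0 for c in chans)
    print(s, chans, "OK for DynamicHead" if ok else "NOT OK")

print(head_input_channels([256, 512, 1024], "n"))  # stock YOLO11 widths: unequal
```

For scale 'n' this yields 64 channels per level, which matches `channel = (64, 64, 64)` in the test block of Section III.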

VI. Successful run record

Finally, here is a screenshot of a successful run.

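VII. Summary

That is the end of the main content of this article. I recommend my YOLOv11 column on improvements that reliably raise accuracy. It is newly launched and currently has an average quality score of 98. I will keep reproducing papers from the latest top conferences and also supplement older improvement mechanisms. If this article helped you, please subscribe to the column and follow for more updates~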