I. Introduction

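The improvement in this article combines the YOLOv11 model with the Diverse Branch Block (DBB). DBB is a structural re-parameterization technique for improving the performance of convolutional neural networks. Its core idea is to combine diverse branches of different scales and complexity, which enriches the feature space. I placed it at several different positions in YOLOv11 and each placement gave some accuracy gain. The DBB module also adds few parameters: after adding three of these modules to the model, GFLOPs rose by 0.04. This article also contains an exclusive second-round innovation: a C3k2 mechanism built on DBB.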


II. Principles of the Diverse Branch Block

Paper: official paper link

Code: official code link

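2.1 Basic Principles of the Diverse Branch Block

The basic idea of the Diverse Branch Block (DBB) is to make a convolutional layer more complex during training. It does this by introducing convolution branches of different sizes and structures, which enriches the network's feature representation. The principle can be summarized in the following points:

1. Diverse branch structure:

DBB combines branches of different scales and complexity, such as convolution kernels of different sizes and average pooling, to increase the representational capacity of a single convolution.

2. Separation of training and inference:

During training, DBB uses the complex multi-branch structure. During inference, these branches are equivalently converted into a single convolutional layer, so inference stays efficient.

3. Unchanged macro architecture:

DBB can be inserted into an existing network as a drop-in replacement for a regular convolutional layer without changing the overall network architecture.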
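The equivalence behind point 2 can be checked numerically. Below is a minimal numpy sketch of the simplest case: Transform VI (padding a small kernel) followed by Transform II (adding branches), both described in section 2.2. It uses a naive `conv2d` helper written only for illustration, with stride 1 and no BN. A KxK branch and a 1x1 branch whose outputs are summed give the same result as a single KxK conv. That conv's kernel is the KxK kernel plus the zero-padded 1x1 kernel.

```python
import numpy as np


def conv2d(x, w, b=None, pad=0):
    """Naive stride-1 cross-correlation (PyTorch's conv2d convention).
    x: (C_in, H, W), w: (C_out, C_in, k, k), b: (C_out,) or None."""
    k = w.shape[-1]
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    win = np.lib.stride_tricks.sliding_window_view(xp, (k, k), axis=(1, 2))  # (C_in, H', W', k, k)
    out = np.einsum("chwij,ocij->ohw", win, w)
    return out if b is None else out + b[:, None, None]


rng = np.random.default_rng(0)
x = rng.standard_normal((4, 8, 8))
w3, b3 = rng.standard_normal((6, 4, 3, 3)), rng.standard_normal(6)  # KxK branch
w1, b1 = rng.standard_normal((6, 4, 1, 1)), rng.standard_normal(6)  # 1x1 branch

# Training-time view: two parallel branches, outputs summed.
y_train = conv2d(x, w3, b3, pad=1) + conv2d(x, w1, b1, pad=0)

# Transform VI: zero-pad the 1x1 kernel to 3x3 so its weight sits at the centre.
w1_as_3x3 = np.pad(w1, ((0, 0), (0, 0), (1, 1), (1, 1)))
# Transform II: branches with the same configuration add kernel-wise and bias-wise.
w_merged, b_merged = w3 + w1_as_3x3, b3 + b1
y_deploy = conv2d(x, w_merged, b_merged, pad=1)

print(np.allclose(y_train, y_deploy))  # True
```

The 1x1 branch needs no padding, while the padded 3x3 kernel uses padding 1. Both therefore read the same input pixel at the centre of each window, which is why the outputs match exactly.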

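Below is a design example of the Diverse Branch Block (DBB):

At training time (left), the DBB consists of convolution layers of different sizes and average pooling layers. These are arranged in parallel in a fairly complex way, and their outputs are finally merged. After training, this complex structure is converted into a single convolutional layer used for inference (right), which keeps inference efficient. This conversion lets DBB add micro-structural complexity at training time while keeping the macro architecture unchanged.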

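2.2 Diverse Branch Structure

The diverse branch structure is introduced into convolutional neural networks to strengthen the model's feature extraction through diverse branches. These branches contain convolution and pooling layers of different sizes, plus other possible operations, and they work in parallel to capture different feature representations. After training, this complex structure can be merged and simplified into a single convolutional layer, so it adds no extra computation at inference. As a result, DBB can directly replace existing convolutional layers and improve the performance of existing architectures without modifying the overall architecture.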

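Below I show in detail how six transformations convert the training-time Diverse Branch Block (DBB) into a regular convolutional layer for inference. Each transformation corresponds to a specific operation:

1. Transform I:

Fuse a convolutional layer with its batch normalization (batch norm).

2. Transform II:

Merge the outputs of convolutional layers that have the same configuration (branch addition).

3. Transform III:

Merge sequential convolutional layers (a 1x1 conv followed by a KxK conv).

4. Transform IV:

Merge convolutional layers through depth concatenation (concat).

5. Transform V:

Fold an average pooling (AVG) operation into a convolution.

6. Transform VI:

Combine convolutional layers of different scales.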
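Transform I is the building block that every other transformation relies on. Below is a minimal numpy sketch that assumes BN runs in eval mode (running statistics) and uses a naive `conv2d` helper written only for illustration. The fused kernel and bias follow the same formula as `transI_fusebn` in the core code.

```python
import numpy as np


def conv2d(x, w, b=None, pad=0):
    """Naive stride-1 cross-correlation. x: (C_in, H, W), w: (C_out, C_in, k, k)."""
    k = w.shape[-1]
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    win = np.lib.stride_tricks.sliding_window_view(xp, (k, k), axis=(1, 2))
    out = np.einsum("chwij,ocij->ohw", win, w)
    return out if b is None else out + b[:, None, None]


rng = np.random.default_rng(0)
x = rng.standard_normal((3, 6, 6))
w = rng.standard_normal((5, 3, 3, 3))  # conv without bias, as in conv_bn()
gamma, beta = rng.standard_normal(5), rng.standard_normal(5)
mean, var, eps = rng.standard_normal(5), rng.random(5) + 0.5, 1e-5

# Training-time structure evaluated in eval mode: conv -> BN with running statistics.
z = conv2d(x, w, pad=1)
y_bn = (gamma[:, None, None] * (z - mean[:, None, None]) / np.sqrt(var[:, None, None] + eps)
        + beta[:, None, None])

# Transform I: scale each output kernel by gamma / std and fold the shift into a bias.
std = np.sqrt(var + eps)
w_fused = w * (gamma / std).reshape(-1, 1, 1, 1)
b_fused = beta - mean * gamma / std
y_fused = conv2d(x, w_fused, b_fused, pad=1)

print(np.allclose(y_bn, y_fused))  # True
```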

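As the right-hand box of the figure shows, these transformations fold the training-time components (regular convolutions, average pooling and batch normalization) into the single convolution of the inference-time DBB. The model can therefore exploit DBB's diverse feature extraction during training without adding any burden at inference.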
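Transform V works because a KxK average pool is a convolution in disguise. Its kernel holds 1/K² at the positions where output channel c reads input channel c, and zeros everywhere else. This is the same kernel that `transV_avg` in the core code builds for groups=1. Below is a minimal numpy sketch with stride 1 and no padding. When padding is used, the pool must count the padded zeros in its average. That is the default behaviour of PyTorch's `nn.AvgPool2d` (`count_include_pad=True`).

```python
import numpy as np

rng = np.random.default_rng(0)
C, K = 4, 3
x = rng.standard_normal((C, 7, 7))
win = np.lib.stride_tricks.sliding_window_view(x, (K, K), axis=(1, 2))  # (C, 5, 5, K, K)

# Average pooling, stride 1, no padding.
y_pool = win.mean(axis=(-2, -1))

# Transform V: the equivalent conv kernel has 1/K^2 where output channel c reads
# input channel c, and zeros elsewhere (what transV_avg builds for groups=1).
w_avg = np.zeros((C, C, K, K))
w_avg[np.arange(C), np.arange(C)] = 1.0 / K ** 2
y_conv = np.einsum("chwij,ocij->ohw", win, w_avg)

print(y_conv.shape, np.allclose(y_pool, y_conv))  # (4, 5, 5) True
```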

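2.3 Separation of Training and Inference

Separating training and inference means the model uses the complex DBB structure during training and converts it into a simplified convolution for inference. During training, the model can exploit DBB's diversity to strengthen feature extraction and learning. In real deployment, that is, at inference time, it stays efficient because the computation is reduced. The model thus keeps its accuracy while also staying fast and resource-efficient.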
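To put numbers on this trade-off, the sketch below counts the learnable parameters of the `DiverseBranchBlock` from the core-code section. It assumes groups=1 and the default `internal_channels_1x1_3x3` (= in_channels). It counts only conv weights and BN affine parameters, because BN running statistics are buffers. After `switch_to_deploy()`, only one KxK conv with a bias remains. The two helper functions are hypothetical and written for illustration.

```python
def dbb_train_params(c1, c2, k):
    """Learnable parameters of the training-time DiverseBranchBlock
    (assumes groups=1 and internal_channels_1x1_3x3 == c1)."""
    origin = c2 * c1 * k * k + 2 * c2                  # KxK conv + BN(gamma, beta)
    one_by_one = c2 * c1 + 2 * c2                      # 1x1 conv + BN
    avg = c2 * c1 + 2 * c2 + 2 * c2                    # 1x1 conv + BNAndPadLayer + avgbn
    one_by_one_kxk = c1 * c1 + 2 * c1 + c2 * c1 * k * k + 2 * c2  # idconv1 + bn1 + conv2 + bn2
    return origin + one_by_one + avg + one_by_one_kxk


def dbb_deploy_params(c1, c2, k):
    """Parameters after switch_to_deploy(): a single KxK conv with bias."""
    return c2 * c1 * k * k + c2


print(dbb_train_params(64, 64, 3), dbb_deploy_params(64, 64, 3))  # 86784 36928
```

For a 64→64 3x3 block, the training-time block has more than twice the parameters of the deployed conv. The extra branches cost memory and compute only during training.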

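Below I show how different convolution combinations (such as the 1x1 and KxK convolutions in the figure) are used at training time. I also show how these combinations are converted into a simplified structure at inference time (such as the concatenation used by Transform IV in the figure):

Looking at the figure, it illustrates three different cases:

A) Groupwise conv: the input is split into several groups, and each group is convolved with its own kernels.

B) Training-time 1x1-KxK structure: a 1x1 convolution is applied first (mapping the input to an internal channel count), followed by a grouped KxK convolution.

C) The Transform IV perspective: the grouped 1x1-KxK structure is viewed as several independent per-group branches. Within each group, the feature map is processed by that group's 1x1 convolution and then its KxK convolution, and the per-group outputs are concatenated (concat).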
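Case C is what `transIII_1x1_kxk` in the core code does when groups > 1. Below is a minimal numpy sketch of the same idea, assuming BN has already been fused into both convs (Transform I). It uses a naive grouped `conv2d` helper written only for illustration, and the channel counts are arbitrary assumptions. The sketch proceeds in three steps:

- The 1x1 output is padded with its own bias `b1`, which is the role `BNAndPadLayer` plays.
- Each group's 1x1 and KxK kernels are merged (Transform III).
- The per-group kernels are concatenated (Transform IV).

```python
import numpy as np


def conv2d(x, w, b=None, pad=0, groups=1):
    """Naive stride-1 grouped cross-correlation.
    x: (C_in, H, W); w: (C_out, C_in // groups, k, k); b: (C_out,) or None."""
    k = w.shape[-1]
    cin_g, cout_g = w.shape[1], w.shape[0] // groups
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    win = np.lib.stride_tricks.sliding_window_view(xp, (k, k), axis=(1, 2))
    out = np.concatenate([
        np.einsum("chwij,ocij->ohw", win[g * cin_g:(g + 1) * cin_g], w[g * cout_g:(g + 1) * cout_g])
        for g in range(groups)
    ])
    return out if b is None else out + b[:, None, None]


rng = np.random.default_rng(0)
groups, C, D, E, K = 2, 4, 4, 6, 3  # groups, input / internal / output channels, kernel size
x = rng.standard_normal((C, 8, 8))
k1, b1 = rng.standard_normal((D, C // groups, 1, 1)), rng.standard_normal(D)  # grouped 1x1
k2, b2 = rng.standard_normal((E, D // groups, K, K)), rng.standard_normal(E)  # grouped KxK

# Training-time path: 1x1 -> pad the border with b1 (like BNAndPadLayer) -> KxK without padding.
y1 = conv2d(x, k1, b1, groups=groups)
y1p = np.pad(y1 - b1[:, None, None], ((0, 0), (1, 1), (1, 1))) + b1[:, None, None]
y_train = conv2d(y1p, k2, b2, groups=groups)

# Transform III inside each group, then Transform IV (concat along output channels).
dg, eg = D // groups, E // groups
k_slices, b_slices = [], []
for g in range(groups):
    k1_g = k1[g * dg:(g + 1) * dg, :, 0, 0]  # (dg, C // groups)
    k2_g = k2[g * eg:(g + 1) * eg]           # (eg, dg, K, K)
    k_slices.append(np.einsum("edij,dc->ecij", k2_g, k1_g))
    b_slices.append(np.einsum("edij,d->e", k2_g, b1[g * dg:(g + 1) * dg]))
k_merged = np.concatenate(k_slices)          # (E, C // groups, K, K)
b_merged = np.concatenate(b_slices) + b2

y_deploy = conv2d(x, k_merged, b_merged, pad=K // 2, groups=groups)
print(np.allclose(y_train, y_deploy))  # True
```

Padding with `b1` instead of zeros is essential. It makes the border of the sequential path equal to what the merged conv computes when it zero-pads the input.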

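2.4 Unchanged Macro Architecture

An unchanged macro architecture means DBB was designed to be compatible with existing network architectures. It can be embedded as a module without changing the overall architecture of popular networks such as ResNet. DBB therefore strengthens the network's feature extraction while preserving the layout of the original network, so inference efficiency and performance are retained. Researchers and developers can apply DBB directly to existing deep learning models without large-scale architectural changes.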

III. Core Code of the Diverse Branch Block

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
import numpy as np


__all__ = ['DiverseBranchBlock', 'C3k2_DBB_backbone', 'C3k2_DBB_neck']


def autopad(k, p=None, d=1):  # kernel, padding, dilation
    """Pad to 'same' shape outputs."""
    if d > 1:
        k = d * (k - 1) + 1 if isinstance(k, int) else [d * (x - 1) + 1 for x in k]  # actual kernel-size
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p


class Conv(nn.Module):
    """Standard convolution with args(ch_in, ch_out, kernel, stride, padding, groups, dilation, activation)."""
    default_act = nn.SiLU()  # default activation

    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, d=1, act=True):
        """Initialize Conv layer with given arguments including activation."""
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p, d), groups=g, dilation=d, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = self.default_act if act is True else act if isinstance(act, nn.Module) else nn.Identity()

    def forward(self, x):
        """Apply convolution, batch normalization and activation to input tensor."""
        return self.act(self.bn(self.conv(x)))

    def forward_fuse(self, x):
        """Apply convolution and activation (used after BN has been fused into the conv)."""
        return self.act(self.conv(x))


def transI_fusebn(kernel, bn):
    # Transform I: fold BN (running statistics) into the preceding conv's kernel and bias
    gamma = bn.weight
    std = (bn.running_var + bn.eps).sqrt()
    return kernel * ((gamma / std).reshape(-1, 1, 1, 1)), bn.bias - bn.running_mean * gamma / std


def transII_addbranch(kernels, biases):
    # Transform II: parallel branches with the same configuration are summed
    return sum(kernels), sum(biases)


def transIII_1x1_kxk(k1, b1, k2, b2, groups):
    # Transform III: merge a 1x1 conv followed by a KxK conv into a single KxK conv
    if groups == 1:
        k = F.conv2d(k2, k1.permute(1, 0, 2, 3))
        b_hat = (k2 * b1.reshape(1, -1, 1, 1)).sum((1, 2, 3))
    else:
        # Merge group by group, then concatenate (Transform IV)
        k_slices = []
        b_slices = []
        k1_T = k1.permute(1, 0, 2, 3)
        k1_group_width = k1.size(0) // groups
        k2_group_width = k2.size(0) // groups
        for g in range(groups):
            k1_T_slice = k1_T[:, g * k1_group_width:(g + 1) * k1_group_width, :, :]
            k2_slice = k2[g * k2_group_width:(g + 1) * k2_group_width, :, :, :]
            k_slices.append(F.conv2d(k2_slice, k1_T_slice))
            b_slices.append(
                (k2_slice * b1[g * k1_group_width:(g + 1) * k1_group_width].reshape(1, -1, 1, 1)).sum((1, 2, 3)))
        k, b_hat = transIV_depthconcat(k_slices, b_slices)
    return k, b_hat + b2


def transIV_depthconcat(kernels, biases):
    # Transform IV: per-group kernels and biases are concatenated along the output-channel axis
    return torch.cat(kernels, dim=0), torch.cat(biases)


def transV_avg(channels, kernel_size, groups):
    # Transform V: express KxK average pooling as a conv kernel (1/K^2 on each channel's own input)
    input_dim = channels // groups
    k = torch.zeros((channels, input_dim, kernel_size, kernel_size))
    k[np.arange(channels), np.tile(np.arange(input_dim), groups), :, :] = 1.0 / kernel_size ** 2
    return k


# This has not been tested with non-square kernels (kernel.size(2) != kernel.size(3)) nor even-size kernels
def transVI_multiscale(kernel, target_kernel_size):
    # Transform VI: zero-pad a smaller kernel (e.g. 1x1) to the target KxK size, centred
    H_pixels_to_pad = (target_kernel_size - kernel.size(2)) // 2
    W_pixels_to_pad = (target_kernel_size - kernel.size(3)) // 2
    # F.pad takes the padding of the last dimension (W) first, then H
    return F.pad(kernel, [W_pixels_to_pad, W_pixels_to_pad, H_pixels_to_pad, H_pixels_to_pad])


def conv_bn(in_channels, out_channels, kernel_size, stride=1, padding=0, dilation=1, groups=1,
            padding_mode='zeros'):
    conv_layer = nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size,
                           stride=stride, padding=padding, dilation=dilation, groups=groups,
                           bias=False, padding_mode=padding_mode)
    bn_layer = nn.BatchNorm2d(num_features=out_channels, affine=True)
    se = nn.Sequential()
    se.add_module('conv', conv_layer)
    se.add_module('bn', bn_layer)
    return se


class IdentityBasedConv1x1(nn.Conv2d):
    """1x1 conv whose effective kernel is identity + a learnable residual (initialized to zero)."""

    def __init__(self, channels, groups=1):
        super(IdentityBasedConv1x1, self).__init__(in_channels=channels, out_channels=channels, kernel_size=1,
                                                   stride=1, padding=0, groups=groups, bias=False)

        assert channels % groups == 0
        input_dim = channels // groups
        id_value = np.zeros((channels, input_dim, 1, 1))
        for i in range(channels):
            id_value[i, i % input_dim, 0, 0] = 1
        self.id_tensor = torch.from_numpy(id_value).type_as(self.weight)
        nn.init.zeros_(self.weight)

    def forward(self, input):
        kernel = self.weight + self.id_tensor.to(self.weight.device).type_as(self.weight)
        result = F.conv2d(input, kernel, None, stride=1, padding=0, dilation=self.dilation, groups=self.groups)
        return result

    def get_actual_kernel(self):
        return self.weight + self.id_tensor.to(self.weight.device).type_as(self.weight)


class BNAndPadLayer(nn.Module):
    """BN followed by padding with the BN output of a zero input (computed from running statistics),
    so that 1x1 -> BN -> pad -> KxK can later be merged exactly into one padded KxK conv."""

    def __init__(self,
                 pad_pixels,
                 num_features,
                 eps=1e-5,
                 momentum=0.1,
                 affine=True,
                 track_running_stats=True):
        super(BNAndPadLayer, self).__init__()
        self.bn = nn.BatchNorm2d(num_features, eps, momentum, affine, track_running_stats)
        self.pad_pixels = pad_pixels

    def forward(self, input):
        output = self.bn(input)
        if self.pad_pixels > 0:
            if self.bn.affine:
                pad_values = self.bn.bias.detach() - self.bn.running_mean * self.bn.weight.detach() / torch.sqrt(
                    self.bn.running_var + self.bn.eps)
            else:
                pad_values = - self.bn.running_mean / torch.sqrt(self.bn.running_var + self.bn.eps)
            output = F.pad(output, [self.pad_pixels] * 4)
            pad_values = pad_values.view(1, -1, 1, 1)
            output[:, :, 0:self.pad_pixels, :] = pad_values
            output[:, :, -self.pad_pixels:, :] = pad_values
            output[:, :, :, 0:self.pad_pixels] = pad_values
            output[:, :, :, -self.pad_pixels:] = pad_values
        return output

    @property
    def weight(self):
        return self.bn.weight

    @property
    def bias(self):
        return self.bn.bias

    @property
    def running_mean(self):
        return self.bn.running_mean

    @property
    def running_var(self):
        return self.bn.running_var

    @property
    def eps(self):
        return self.bn.eps


class DiverseBranchBlock(nn.Module):
    def __init__(self, in_channels, out_channels, kernel_size,
                 stride=1, padding=None, dilation=1, groups=1,
                 internal_channels_1x1_3x3=None,
                 deploy=False, single_init=False):
        super(DiverseBranchBlock, self).__init__()
        self.deploy = deploy

        self.nonlinear = Conv.default_act

        self.kernel_size = kernel_size
        self.out_channels = out_channels
        self.groups = groups

        if padding is None:
            padding = autopad(kernel_size, padding, dilation)
        assert padding == kernel_size // 2

        if deploy:
            self.dbb_reparam = nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size,
                                         stride=stride,
                                         padding=padding, dilation=dilation, groups=groups, bias=True)

        else:
            # Branch 1: the original KxK conv + BN
            self.dbb_origin = conv_bn(in_channels=in_channels, out_channels=out_channels, kernel_size=kernel_size,
                                      stride=stride, padding=padding, dilation=dilation, groups=groups)

            # Branch 2: (1x1 conv + BN-and-pad) -> average pooling -> BN
            self.dbb_avg = nn.Sequential()
            if groups < out_channels:
                self.dbb_avg.add_module('conv',
                                        nn.Conv2d(in_channels=in_channels, out_channels=out_channels, kernel_size=1,
                                                  stride=1, padding=0, groups=groups, bias=False))
                self.dbb_avg.add_module('bn', BNAndPadLayer(pad_pixels=padding, num_features=out_channels))
                self.dbb_avg.add_module('avg', nn.AvgPool2d(kernel_size=kernel_size, stride=stride, padding=0))
                # Branch 3: plain 1x1 conv + BN
                self.dbb_1x1 = conv_bn(in_channels=in_channels, out_channels=out_channels, kernel_size=1,
                                       stride=stride, padding=0, groups=groups)
            else:
                self.dbb_avg.add_module('avg', nn.AvgPool2d(kernel_size=kernel_size, stride=stride, padding=padding))

            self.dbb_avg.add_module('avgbn', nn.BatchNorm2d(out_channels))

            if internal_channels_1x1_3x3 is None:
                internal_channels_1x1_3x3 = in_channels if groups < out_channels else 2 * in_channels  # For mobilenet, it is better to have 2X internal channels

            # Branch 4: 1x1 conv + BN-and-pad -> KxK conv + BN
            self.dbb_1x1_kxk = nn.Sequential()
            if internal_channels_1x1_3x3 == in_channels:
                self.dbb_1x1_kxk.add_module('idconv1', IdentityBasedConv1x1(channels=in_channels, groups=groups))
            else:
                self.dbb_1x1_kxk.add_module('conv1',
                                            nn.Conv2d(in_channels=in_channels, out_channels=internal_channels_1x1_3x3,
                                                      kernel_size=1, stride=1, padding=0, groups=groups, bias=False))
            self.dbb_1x1_kxk.add_module('bn1', BNAndPadLayer(pad_pixels=padding, num_features=internal_channels_1x1_3x3,
                                                             affine=True))
            self.dbb_1x1_kxk.add_module('conv2',
                                        nn.Conv2d(in_channels=internal_channels_1x1_3x3, out_channels=out_channels,
                                                  kernel_size=kernel_size, stride=stride, padding=0, groups=groups,
                                                  bias=False))
            self.dbb_1x1_kxk.add_module('bn2', nn.BatchNorm2d(out_channels))

        # The experiments reported in the paper used the default initialization of bn.weight (all as 1). But changing the initialization may be useful in some cases.
        if single_init:
            # Initialize the bn.weight of dbb_origin as 1 and others as 0. This is not the default setting.
            self.single_init()

    def get_equivalent_kernel_bias(self):
        # KxK branch: Transform I
        k_origin, b_origin = transI_fusebn(self.dbb_origin.conv.weight, self.dbb_origin.bn)

        # 1x1 branch: Transform I + VI
        if hasattr(self, 'dbb_1x1'):
            k_1x1, b_1x1 = transI_fusebn(self.dbb_1x1.conv.weight, self.dbb_1x1.bn)
            k_1x1 = transVI_multiscale(k_1x1, self.kernel_size)
        else:
            k_1x1, b_1x1 = 0, 0

        # 1x1 -> KxK branch: Transform I on both convs, then Transform III (+ IV for groups > 1)
        if hasattr(self.dbb_1x1_kxk, 'idconv1'):
            k_1x1_kxk_first = self.dbb_1x1_kxk.idconv1.get_actual_kernel()
        else:
            k_1x1_kxk_first = self.dbb_1x1_kxk.conv1.weight
        k_1x1_kxk_first, b_1x1_kxk_first = transI_fusebn(k_1x1_kxk_first, self.dbb_1x1_kxk.bn1)
        k_1x1_kxk_second, b_1x1_kxk_second = transI_fusebn(self.dbb_1x1_kxk.conv2.weight, self.dbb_1x1_kxk.bn2)
        k_1x1_kxk_merged, b_1x1_kxk_merged = transIII_1x1_kxk(k_1x1_kxk_first, b_1x1_kxk_first, k_1x1_kxk_second,
                                                              b_1x1_kxk_second, groups=self.groups)

        # Average-pooling branch: Transform V + I (+ III when a 1x1 conv precedes the pooling)
        k_avg = transV_avg(self.out_channels, self.kernel_size, self.groups)
        k_1x1_avg_second, b_1x1_avg_second = transI_fusebn(k_avg.to(self.dbb_avg.avgbn.weight.device),
                                                           self.dbb_avg.avgbn)
        if hasattr(self.dbb_avg, 'conv'):
            k_1x1_avg_first, b_1x1_avg_first = transI_fusebn(self.dbb_avg.conv.weight, self.dbb_avg.bn)
            k_1x1_avg_merged, b_1x1_avg_merged = transIII_1x1_kxk(k_1x1_avg_first, b_1x1_avg_first, k_1x1_avg_second,
                                                                  b_1x1_avg_second, groups=self.groups)
        else:
            k_1x1_avg_merged, b_1x1_avg_merged = k_1x1_avg_second, b_1x1_avg_second

        # Sum all branches: Transform II
        return transII_addbranch((k_origin, k_1x1, k_1x1_kxk_merged, k_1x1_avg_merged),
                                 (b_origin, b_1x1, b_1x1_kxk_merged, b_1x1_avg_merged))

    def switch_to_deploy(self):
        # Replace all training-time branches with one equivalent KxK conv
        if hasattr(self, 'dbb_reparam'):
            return
        kernel, bias = self.get_equivalent_kernel_bias()
        self.dbb_reparam = nn.Conv2d(in_channels=self.dbb_origin.conv.in_channels,
                                     out_channels=self.dbb_origin.conv.out_channels,
                                     kernel_size=self.dbb_origin.conv.kernel_size, stride=self.dbb_origin.conv.stride,
                                     padding=self.dbb_origin.conv.padding, dilation=self.dbb_origin.conv.dilation,
                                     groups=self.dbb_origin.conv.groups, bias=True)
        self.dbb_reparam.weight.data = kernel
        self.dbb_reparam.bias.data = bias
        for para in self.parameters():
            para.detach_()
        self.__delattr__('dbb_origin')
        self.__delattr__('dbb_avg')
        if hasattr(self, 'dbb_1x1'):
            self.__delattr__('dbb_1x1')
        self.__delattr__('dbb_1x1_kxk')

    def forward(self, inputs):
        if hasattr(self, 'dbb_reparam'):
            return self.nonlinear(self.dbb_reparam(inputs))

        out = self.dbb_origin(inputs)
        if hasattr(self, 'dbb_1x1'):
            out += self.dbb_1x1(inputs)
        out += self.dbb_avg(inputs)
        out += self.dbb_1x1_kxk(inputs)
        return self.nonlinear(out)

    def init_gamma(self, gamma_value):
        if hasattr(self, "dbb_origin"):
            torch.nn.init.constant_(self.dbb_origin.bn.weight, gamma_value)
        if hasattr(self, "dbb_1x1"):
            torch.nn.init.constant_(self.dbb_1x1.bn.weight, gamma_value)
        if hasattr(self, "dbb_avg"):
            torch.nn.init.constant_(self.dbb_avg.avgbn.weight, gamma_value)
        if hasattr(self, "dbb_1x1_kxk"):
            torch.nn.init.constant_(self.dbb_1x1_kxk.bn2.weight, gamma_value)

    def single_init(self):
        self.init_gamma(0.0)
        if hasattr(self, "dbb_origin"):
            torch.nn.init.constant_(self.dbb_origin.bn.weight, 1.0)


class Bottleneck_DBB(nn.Module):
    """Standard bottleneck whose second conv is a 3x3 DiverseBranchBlock (k[1] is not used)."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):  # ch_in, ch_out, shortcut, groups, kernels, expand
        super().__init__()
        c_ = int(c2 * e)  # hidden channels

        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = DiverseBranchBlock(c_, c2, 3, stride=1, groups=g)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))


class Bottleneck(nn.Module):
    """Standard bottleneck."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):
        """Initializes a standard bottleneck module with optional shortcut connection and configurable parameters."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = Conv(c_, c2, k[1], 1, g=g)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Apply the two convolutions, with a residual connection when enabled."""
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))


class C2f(nn.Module):
    """Faster Implementation of CSP Bottleneck with 2 convolutions."""

    def __init__(self, c1, c2, n=1, shortcut=False, g=1, e=0.5):
        """Initializes a CSP bottleneck with 2 convolutions and n Bottleneck blocks for faster processing."""
        super().__init__()
        self.c = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, 2 * self.c, 1, 1)
        self.cv2 = Conv((2 + n) * self.c, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.ModuleList(Bottleneck(self.c, self.c, shortcut, g, k=((3, 3), (3, 3)), e=1.0) for _ in range(n))

    def forward(self, x):
        """Forward pass through C2f layer."""
        y = list(self.cv1(x).chunk(2, 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))

    def forward_split(self, x):
        """Forward pass using split() instead of chunk()."""
        y = list(self.cv1(x).split((self.c, self.c), 1))
        y.extend(m(y[-1]) for m in self.m)
        return self.cv2(torch.cat(y, 1))


class C3(nn.Module):
    """CSP Bottleneck with 3 convolutions."""

    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):
        """Initialize the CSP Bottleneck with given channels, number, shortcut, groups, and expansion values."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(2 * c_, c2, 1)  # optional act=FReLU(c2)
        self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, k=((1, 1), (3, 3)), e=1.0) for _ in range(n)))

    def forward(self, x):
        """Forward pass through the CSP bottleneck with 3 convolutions."""
        return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))


class C3k(C3):
    """C3k is a CSP bottleneck module; here its inner blocks are Bottleneck_DBB."""

    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5, k=3):
        """Initializes the C3k module with specified channels, number of layers, and configurations."""
        super().__init__(c1, c2, n, shortcut, g, e)
        c_ = int(c2 * e)  # hidden channels
        # self.m = nn.Sequential(*(RepBottleneck(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))
        self.m = nn.Sequential(*(Bottleneck_DBB(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))


class C3k2_DBB_backbone(C2f):
    """C3k2 variant that uses DBB everywhere: Bottleneck_DBB blocks, or C3k (with Bottleneck_DBB) when c3k=True."""

    def __init__(self, c1, c2, n=1, c3k=False, e=0.5, g=1, shortcut=True):
        """Initializes the C3k2 module, a faster CSP Bottleneck with 2 convolutions and optional C3k blocks."""
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(
            C3k(self.c, self.c, 2, shortcut, g) if c3k else Bottleneck_DBB(self.c, self.c, shortcut, g) for _ in range(n)
        )


class C3k2_DBB_neck(C2f):
    """C3k2 variant that uses DBB only inside C3k (c3k=True); otherwise it keeps the standard Bottleneck."""

    def __init__(self, c1, c2, n=1, c3k=False, e=0.5, g=1, shortcut=True):
        """Initializes the C3k2 module, a faster CSP Bottleneck with 2 convolutions and optional C3k blocks."""
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(
            C3k(self.c, self.c, 2, shortcut, g) if c3k else Bottleneck(self.c, self.c, shortcut, g) for _ in range(n)
        )


if __name__ == "__main__":
    # Generating Sample image
    image_size = (1, 64, 224, 224)
    image = torch.rand(*image_size)

    # Model
    model = C3k2_DBB_backbone(64, 64)

    out = model(image)
    print(out.size())
```

IV. Step-by-Step Guide to Adding the Diverse Branch Block

4.1 Tutorial: Adding the Diverse Branch Block

4.1.1 Modification 1

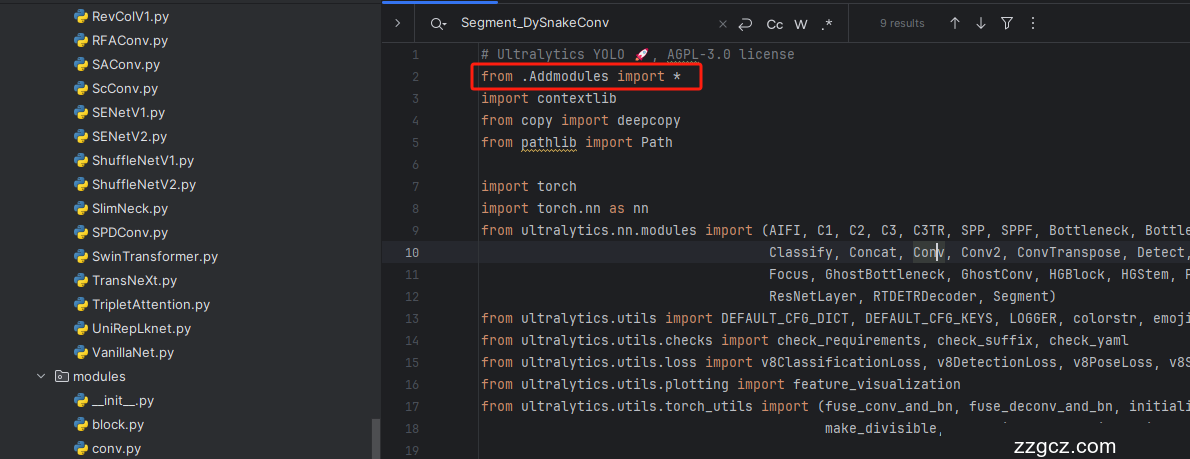

The first step, as usual, is to create the file. Under the 'ultralytics/nn' folder, create a directory named 'Addmodules'. Then create a new .py file inside it and paste the core code into it.

4.1.2 Modification 2

In the second step, create a new .py file named '__init__.py' in the same directory and import our new module in it.

4.1.3 Modification 3

In the third step, open the file 'ultralytics/nn/tasks.py', then import and register our modules there.

4.1.4 Modification 4

Register the new modules inside parse_model in the same way as my additions.
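In Ultralytics, parse_model turns each yaml row `[from, repeats, module, args]` into constructor arguments. The sketch below is a simplified, hypothetical re-implementation of that bookkeeping. It is not the library's code, and the real function differs between versions. It assumes the usual registration pattern:

- All three modules get the input/output channels `c1, c2` prepended to their args.
- The C3k2-style modules also receive the repeat count `n` as their third argument.
- `DiverseBranchBlock` must not receive `n`, because its signature is `(in_channels, out_channels, kernel_size, stride, ...)`.

The scale values are the YOLO11n ones from the yaml files below. The set names and `build_args` are made up for illustration.

```python
import math


def make_divisible(x, divisor=8):
    # Round a channel count up to a multiple of `divisor`
    return math.ceil(x / divisor) * divisor


# Hypothetical registrations: modules that get (c1, c2) prepended ...
CHANNEL_MODULES = {"Conv", "C3k2", "C3k2_DBB_backbone", "C3k2_DBB_neck", "DiverseBranchBlock"}
# ... and the subset that also takes the repeat count n as its 3rd argument.
REPEAT_MODULES = {"C3k2", "C3k2_DBB_backbone", "C3k2_DBB_neck"}


def build_args(module, c1, n, args, depth=0.50, width=0.25, max_channels=1024):
    """Simplified sketch of parse_model's argument handling (YOLO11n scale by default)."""
    n = max(round(n * depth), 1) if n > 1 else n  # depth scaling of repeats
    if module in CHANNEL_MODULES:
        c2 = make_divisible(min(args[0], max_channels) * width, 8)  # width scaling
        args = [c1, c2, *args[1:]]
        if module in REPEAT_MODULES:
            args.insert(2, n)  # repeats are handled inside the module
            n = 1
    return n, args


# Layer 2 of yaml version 1; its input is layer 1 (128 * 0.25 = 32 channels).
print(build_args("C3k2_DBB_backbone", 32, 2, [256, False, 0.25]))  # (1, [32, 64, 1, False, 0.25])
# Layer 1 of yaml version 3; its input is layer 0 (64 * 0.25 = 16 channels).
print(build_args("DiverseBranchBlock", 16, 1, [128, 3, 2]))  # (1, [16, 32, 3, 2])
```

The first call maps onto `C3k2_DBB_backbone(c1, c2, n, c3k, e)` and the second onto `DiverseBranchBlock(in_channels, out_channels, kernel_size, stride)`. If `DiverseBranchBlock` were registered among the repeat modules by mistake, `n` would land in its `kernel_size` slot.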

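That completes the modifications; you can now copy the yaml files below and run them.

4.2 YAML Files and Training Screenshots for the Diverse Branch Block

Below are several recommended yaml versions that you can copy for training. There are many possible combinations, and it is not certain which one works best. Results also vary across datasets, and I cannot run experiments on every combination. I have therefore listed three combinations that I think are likely to help; you can also build your own.

4.2.1 Diverse Branch Block yaml version 1 (recommended)

Training information for this version: YOLO11-C3k2-DBB-backbone summary: 496 layers, 2,877,423 parameters, 2,877,407 gradients, 7.1 GFLOPs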

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2_DBB_backbone, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2_DBB_backbone, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2_DBB_backbone, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2_DBB_backbone, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2_DBB_backbone, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2_DBB_backbone, [256, False]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2_DBB_backbone, [512, False]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2_DBB_backbone, [1024, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

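4.2.2 Diverse Branch Block yaml version 2

Which version suits your task best has to be determined through your own experiments. Note that C3k2_DBB_neck only uses DBB inside C3k, that is, in rows whose second argument is True (layers 6, 8 and 22 below). Rows with False fall back to the standard Bottleneck.

Training information for this version: YOLO11-C3k2-DBB-neck summary: 416 layers, 2,815,199 parameters, 2,815,183 gradients, 6.7 GFLOPs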

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2_DBB_neck, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2_DBB_neck, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2_DBB_neck, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2_DBB_neck, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2_DBB_neck, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2_DBB_neck, [256, False]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2_DBB_neck, [512, False]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2_DBB_neck, [1024, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
```

4.2.3 Diverse Branch Block yaml version 3

Training information for this version: YOLO11-DBB summary: 416 layers, 3,471,487 parameters, 3,471,471 gradients, 9.2 GFLOPs

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, DiverseBranchBlock, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, DiverseBranchBlock, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, DiverseBranchBlock, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, DiverseBranchBlock, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

  - [-1, 1, DiverseBranchBlock, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium)

  - [-1, 1, DiverseBranchBlock, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
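Version 3 uses DiverseBranchBlock as the stride-2 downsampling conv. Re-parameterization still holds at stride 2 because every branch uses the same stride and lands on the same output grid. Below is a minimal numpy check that uses naive helpers written only for illustration and omits BN. For simplicity, the average-pooling branch here is a bare pool; in the core code it is preceded by a 1x1 conv when groups < out_channels. The KxK, 1x1 and average-pooling branches at stride 2 collapse into a single stride-2 KxK conv.

```python
import numpy as np


def conv2d(x, w, b=None, pad=0, stride=1):
    """Naive cross-correlation. x: (C_in, H, W), w: (C_out, C_in, k, k)."""
    k = w.shape[-1]
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    win = np.lib.stride_tricks.sliding_window_view(xp, (k, k), axis=(1, 2))[:, ::stride, ::stride]
    out = np.einsum("chwij,ocij->ohw", win, w)
    return out if b is None else out + b[:, None, None]


def avg_pool(x, k, stride, pad):
    # Padded zeros are counted in the average, like nn.AvgPool2d(count_include_pad=True)
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    win = np.lib.stride_tricks.sliding_window_view(xp, (k, k), axis=(1, 2))[:, ::stride, ::stride]
    return win.mean(axis=(-2, -1))


rng = np.random.default_rng(1)
C, K, s = 4, 3, 2
x = rng.standard_normal((C, 8, 8))
w3 = rng.standard_normal((C, C, K, K))  # KxK branch (BN omitted)
w1 = rng.standard_normal((C, C, 1, 1))  # 1x1 branch

# Training-time view: three stride-2 branches, outputs summed.
y_train = conv2d(x, w3, pad=1, stride=s) + conv2d(x, w1, stride=s) + avg_pool(x, K, s, pad=1)

# Deploy view: Transform VI (pad 1x1 to KxK) + Transform V (avg as conv) + Transform II (add).
w_avg = np.zeros((C, C, K, K))
w_avg[np.arange(C), np.arange(C)] = 1.0 / K ** 2
w_merged = w3 + np.pad(w1, ((0, 0), (0, 0), (1, 1), (1, 1))) + w_avg
y_deploy = conv2d(x, w_merged, pad=1, stride=s)

print(y_deploy.shape, np.allclose(y_train, y_deploy))  # (4, 4, 4) True
```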

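4.2.4 Diverse Branch Block training screenshots

Below are training screenshots of the model with the Diverse Branch Block added.

V. Summary

This concludes the main content of this article. I would like to recommend my column on effective YOLOv11 improvements. It is newly launched and currently has an average quality score of 98. Going forward, I will reproduce papers from the latest top conferences and also add some older improvement mechanisms. If this article helped you, please subscribe to the column and follow it for future updates~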